Supervised vs Unsupervised Learning: Ultimate Guide to Machine Learning Mastery

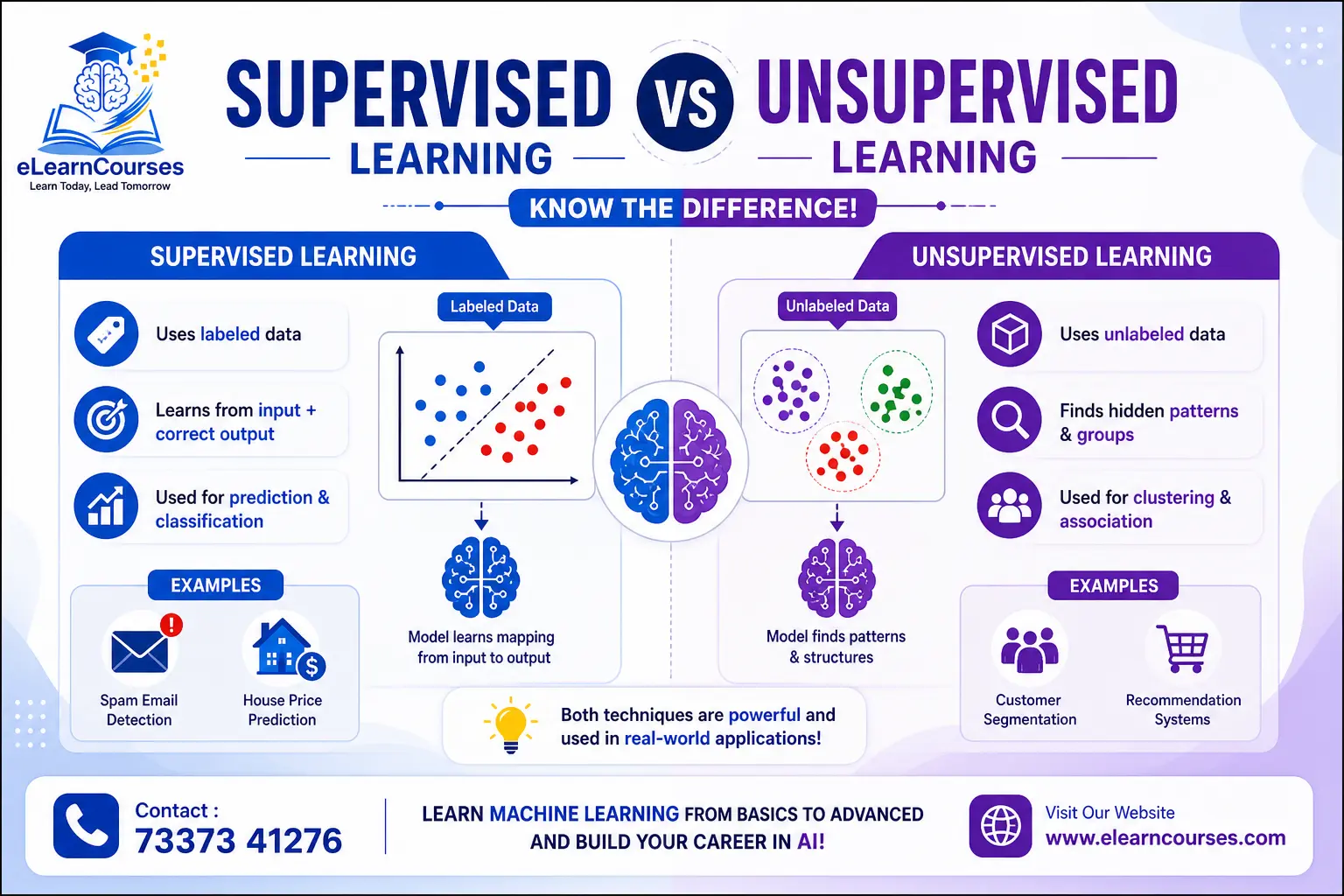

Understanding the fundamental difference between supervised and unsupervised learning is crucial for anyone venturing into machine learning and artificial intelligence. These two paradigms are the cornerstone approaches to training machines to learn from data, make predictions, and discover patterns. Whether you’re a data scientist choosing the right algorithm, a business leader evaluating AI solutions, or a student beginning your machine learning journey, grasping this distinction will shape your approach to solving real-world problems.

Machine learning has transformed industries from healthcare and finance to e-commerce and autonomous vehicles. At its core, the choice between supervised and unsupervised learning determines how algorithms interact with data, what insights they can extract, and which problems they can solve. This guide explores both paradigms in depth, examining their methodologies, algorithms, applications, advantages, limitations, and practical use cases to help you make informed decisions about implementing machine learning solutions.

What is Machine Learning? The Foundation of AI

Machine learning is a subset of artificial intelligence that enables computers to learn from experience without being explicitly programmed. Rather than following rigid, pre-defined rules, machine learning algorithms identify patterns in data and make decisions based on those patterns. The field emerged from pattern recognition and computational learning theory, evolving into one of today’s most transformative technologies.

The Learning Paradigm Landscape

Machine learning encompasses several learning paradigms, each suited to different types of problems and data availability. The primary categories include supervised learning, unsupervised learning, semi-supervised learning, and reinforcement learning. Among these, supervised and unsupervised learning are the most fundamental and widely used approaches.

The distinction between these paradigms centers on the presence or absence of labeled training data. Labeled data includes both input features and corresponding output labels (the “correct answers”), while unlabeled data contains only input features without predefined categories or target values. This fundamental difference in data structure leads to entirely different learning approaches, algorithms, and applications.

Understanding when to apply each paradigm requires considering your data characteristics, business objectives, available resources, and desired outcomes. Some problems naturally lend themselves to one approach, while others may benefit from hybrid methodologies combining multiple learning paradigms.

Supervised Learning: Learning with Guidance

Supervised learning trains algorithms using labeled datasets where each training example includes both input features and corresponding target outputs. The algorithm learns to map inputs to outputs by identifying patterns and relationships in the training data, then applies this learned mapping to make predictions on new, unseen data.

How Supervised Learning Works

The supervised learning process follows a systematic workflow. First, you collect and prepare a labeled dataset containing numerous examples of inputs paired with their correct outputs. For instance, in email classification, each email (input) would be labeled as “spam” or “not spam” (output). In house price prediction, each house description (input features like size, location, bedrooms) pairs with its actual sale price (output).

The algorithm analyzes these labeled examples, identifying patterns and relationships between input features and output labels. Through iterative training, the model adjusts its internal parameters to minimize prediction errors on the training data. Various optimization techniques like gradient descent fine-tune these parameters, gradually improving the model’s accuracy.
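The parameter-adjustment loop described above can be sketched in a few lines. This is a minimal illustration of batch gradient descent for a one-feature linear model, not a production implementation; the function name `fit_linear_gd`, the toy data, the learning rate, and the epoch count are all assumptions chosen for the example.

```python
# Minimal sketch: fit y ~ w * x + b by batch gradient descent on mean squared error.
# The data, learning rate, and epoch count are illustrative assumptions.

def fit_linear_gd(xs, ys, learning_rate=0.05, epochs=2000):
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction errors under the current parameters
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of MSE = (1/n) * sum(error^2) with respect to w and b
        grad_w = (2.0 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2.0 / n) * sum(errors)
        # Step against the gradient to reduce the training error
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # toy labels generated from y = 2x + 1
w, b = fit_linear_gd(xs, ys)
print(round(w, 3), round(b, 3))
```

On this noise-free toy data the learned parameters approach the slope and intercept used to generate the labels. With real data, the learning rate usually needs tuning: too large and the updates diverge, too small and training is slow.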

Once trained, the model is evaluated on a separate test dataset of labeled examples that it did not see during training. This evaluation reveals how well the model generalizes beyond the training set. The appropriate metric depends on the problem type: accuracy, precision, recall, and F1-score for classification; mean squared error and R-squared for regression.
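To make the classification metrics concrete, here is a small sketch that computes them from true and predicted labels for a binary problem. The function name `classification_metrics` and the toy label lists are assumptions for illustration; libraries such as scikit-learn provide equivalent, more complete utilities.

```python
def classification_metrics(y_true, y_pred, positive=1):
    pairs = list(zip(y_true, y_pred))
    # Confusion-matrix counts for the chosen positive class
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = len(pairs) - tp - fp - fn

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

# Toy test-set labels (1 = spam, 0 = not spam); values are made up
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

In this example there are three true positives, one false positive, one false negative, and three true negatives, so all four metrics come out to 0.75. On imbalanced data the metrics typically diverge, which is why accuracy alone can be misleading.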

After successful validation, the trained model is deployed to a production environment, where it makes predictions on genuinely new, unlabeled data. For example, a spam filter trained on historical emails classifies incoming messages, or a credit scoring model trained on past loan applications evaluates new applicants.

Types of Supervised Learning Problems

Supervised learning divides into two main categories based on the nature of the output variable: classification and regression.

Classification involves predicting discrete categorical labels. The output belongs to a finite set of predefined classes. Binary classification problems have two possible outcomes (spam/not spam, fraud/legitimate, disease/healthy), while multi-class classification problems have three or more categories (handwritten digit recognition with classes 0-9, species identification, product categorization).

Common classification algorithms include:

- Logistic Regression: Despite its name, logistic regression solves classification problems by modeling the probability of class membership. It works well for binary classification and provides interpretable results.

- Decision Trees: These algorithms create tree-like models of decisions, splitting data based on feature values. They’re intuitive, handle both numerical and categorical data, and require minimal data preprocessing.

- Random Forests: An ensemble method combining multiple decision trees, random forests reduce overfitting and generally provide more accurate predictions than single trees.

- Support Vector Machines (SVM): SVMs find optimal hyperplanes separating different classes in high-dimensional space. They excel with complex datasets and high-dimensional data.

- Naive Bayes: Based on Bayes’ theorem, these probabilistic classifiers assume feature independence. They work remarkably well for text classification despite the independence assumption rarely being true.

- K-Nearest Neighbors (KNN): This instance-based algorithm classifies data points based on the majority class among their k nearest neighbors. It’s simple and effective for small to medium datasets.

- Neural Networks: Deep learning models with multiple layers can learn complex, hierarchical patterns. They excel at image recognition, natural language processing, and other complex classification tasks.
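Of the algorithms listed above, K-Nearest Neighbors is the simplest to write out in full. The sketch below assumes two numeric features per example and Euclidean distance; the function name `knn_predict`, the toy points, and the labels are invented for illustration.

```python
import math
from collections import Counter

def knn_predict(train_points, train_labels, query, k=3):
    # Sort training examples by Euclidean distance to the query point
    by_distance = sorted(
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    )
    # Majority vote among the k closest examples
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]

# Hypothetical two-feature training set
train_points = [(1.0, 1.0), (1.5, 2.0), (2.0, 1.0),
                (6.0, 6.0), (7.0, 7.5), (6.5, 8.0)]
train_labels = ["not spam", "not spam", "not spam", "spam", "spam", "spam"]

print(knn_predict(train_points, train_labels, (1.2, 1.4)))
print(knn_predict(train_points, train_labels, (6.8, 7.0)))
```

Because every prediction scans the whole training set, this brute-force version becomes slow as the data grows, which is one reason KNN is usually recommended for small to medium datasets.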

Regression predicts continuous numerical values rather than discrete categories. The output can take any value within a range.

Common regression algorithms include:

- Linear Regression: Models linear relationships between features and target values. Simple yet powerful for many applications, particularly when relationships are approximately linear.

- Polynomial Regression: Extends linear regression by modeling non-linear relationships using polynomial features.

- Ridge and Lasso Regression: Regularized versions of linear regression that prevent overfitting by penalizing large coefficients. Ridge uses L2 regularization, while Lasso uses L1.

- Decision Tree Regression: Similar to classification trees but predicting continuous values instead of categories.

- Random Forest Regression: Ensemble method combining multiple regression trees for improved accuracy and robustness.

- Support Vector Regression (SVR): Adapts SVM principles to regression problems, finding a function that deviates from actual target values by no more than a specified margin.

- Neural Networks for Regression: Deep learning models can capture complex non-linear relationships in regression tasks.
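The effect of the L2 and L1 penalties mentioned above is easiest to see in the simplest possible case: one feature and no intercept, where both solutions have a closed form. The sketch below assumes the objectives stated in the comments; the function names and toy data are hypothetical.

```python
def ridge_weight(xs, ys, lam):
    # Minimizes sum((y - w*x)^2) + lam * w^2 for a single weight w
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)

def lasso_weight(xs, ys, lam):
    # Minimizes 0.5 * sum((y - w*x)^2) + lam * |w| for a single weight w.
    # The solution is a soft-thresholded version of the least-squares weight.
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    shrunk = max(abs(sxy) - lam, 0.0)
    return (shrunk if sxy >= 0 else -shrunk) / sxx

xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]  # toy data generated from y = 2x

for lam in (0.0, 14.0, 30.0):
    print(lam, ridge_weight(xs, ys, lam), lasso_weight(xs, ys, lam))
```

With no penalty both methods recover the least-squares weight. As the penalty grows, ridge shrinks the weight smoothly toward zero but never reaches it, while lasso sets it to exactly zero once the penalty is large enough. That is why lasso is often used for feature selection.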

Real-World Applications of Supervised Learning

Supervised learning powers countless applications across industries:

Healthcare and Medical Diagnosis: Algorithms trained on medical images and patient records help diagnose diseases, predict patient outcomes, identify high-risk patients, and recommend treatment plans. For example, convolutional neural networks analyze X-rays, MRIs, and CT scans to detect tumors, fractures, or abnormalities, on some tasks with accuracy approaching that of human radiologists.

Financial Services: Banks and financial institutions use supervised learning for credit scoring, fraud detection, risk assessment, algorithmic trading, and customer churn prediction. Credit scoring models trained on historical loan data predict default probability for new applicants. Fraud detection systems identify suspicious transactions by learning patterns from known fraudulent and legitimate transactions.

Marketing and Customer Analytics: Companies leverage supervised learning for targeted advertising, recommendation systems, sentiment analysis, sales forecasting, and assigning customers to predefined segments. E-commerce platforms predict which products customers might purchase based on browsing history and past purchases.

Computer Vision: Image classification, object detection, facial recognition, and autonomous vehicle navigation rely heavily on supervised learning. Self-driving cars use neural networks trained on millions of labeled images to recognize pedestrians, vehicles, traffic signs, and road conditions.

Natural Language Processing: Spam filtering, sentiment analysis, language translation, chatbots, and text classification employ supervised learning algorithms. Email providers train spam filters on millions of labeled emails, learning to distinguish legitimate messages from spam.

Manufacturing and Quality Control: Predictive maintenance systems forecast equipment failures before they occur, trained on sensor data labeled with failure events. Quality control systems detect defective products by learning from images of acceptable and defective items.

Advantages of Supervised Learning

Supervised learning offers several compelling advantages:

Predictive Accuracy: When sufficient high-quality labeled data is available, supervised learning models can achieve excellent prediction accuracy, in some narrow, well-defined tasks matching or surpassing human performance.

Clear Evaluation Metrics: The presence of ground truth labels enables straightforward model evaluation using well-established metrics. You can quantitatively measure performance and compare different algorithms.

Well-Established Methodologies: Decades of research have produced robust algorithms, optimization techniques, and best practices for supervised learning. Extensive libraries and frameworks (scikit-learn, TensorFlow, PyTorch) make implementation accessible.

Interpretability Options: Some supervised learning algorithms (linear regression, decision trees, logistic regression) provide interpretable models where you can understand how features influence predictions. This interpretability is crucial in regulated industries like healthcare and finance.

Transfer Learning Capabilities: Pre-trained supervised learning models can transfer knowledge to related tasks, reducing training data requirements and computational costs for new applications.

Challenges and Limitations of Supervised Learning

Despite its power, supervised learning faces several challenges:

Data Labeling Requirements: Creating large labeled datasets requires significant time, expense, and expertise. Medical image labeling needs radiologists’ input, creating bottlenecks and high costs. Some applications require millions of labeled examples for adequate performance.

Label Quality Issues: Incorrect or inconsistent labels corrupt model training, leading to poor performance. Ensuring label accuracy requires careful quality control and often multiple human annotators.

Scalability Concerns: As datasets grow to millions or billions of examples, training becomes computationally expensive and time-consuming, requiring specialized hardware like GPUs or TPUs.

Overfitting Risks: Models may memorize training data rather than learning generalizable patterns, performing excellently on training data but poorly on new data. Regularization techniques, cross-validation, and careful model selection help mitigate overfitting.

Limited Adaptability: Supervised models trained on specific data distributions may perform poorly when data characteristics change (concept drift). Retraining becomes necessary as patterns evolve.

Inability to Discover Unknown Patterns: Supervised learning finds patterns related to predefined labels but cannot discover unexpected structures or categories not represented in the training labels.

Unsupervised Learning: Discovering Hidden Patterns

Unsupervised learning analyzes unlabeled data without predefined target outputs, seeking to discover inherent structure, patterns, and relationships within the data itself. Rather than learning to predict specific outcomes, unsupervised algorithms explore data organization, identify similarities and differences, and reveal hidden patterns humans might overlook.

How Unsupervised Learning Works

Unsupervised learning begins with collecting raw, unlabeled data—no target variables or correct answers accompany the input features. The algorithm explores this data independently, identifying structure based on statistical properties, distances, densities, or other mathematical criteria.

Different unsupervised algorithms employ various strategies for finding patterns. Clustering algorithms group similar data points based on feature similarity. Dimensionality reduction techniques compress high-dimensional data into lower dimensions while preserving important information. Association rule learning discovers interesting relationships between variables. Anomaly detection identifies unusual data points deviating from normal patterns.

The algorithm iteratively refines its understanding of data structure. Clustering algorithms might start with random cluster assignments, then repeatedly reassign points to clusters and update cluster characteristics until convergence. Dimensionality reduction methods optimize projections that best preserve data variance or relationships.
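The reassign-and-update loop described above is the core of K-Means. Here is a minimal sketch; to keep the result reproducible it takes the initial centroids as an argument instead of choosing them at random, which is an assumption of this example. The function name `k_means` and the toy points are also invented.

```python
import math

def k_means(points, initial_centroids, max_iterations=100):
    centroids = [tuple(c) for c in initial_centroids]
    assignments = None
    for _ in range(max_iterations):
        # Assignment step: attach every point to its nearest centroid
        new_assignments = [
            min(range(len(centroids)), key=lambda j: math.dist(p, centroids[j]))
            for p in points
        ]
        if new_assignments == assignments:
            break  # no point changed cluster, so the algorithm has converged
        assignments = new_assignments
        # Update step: move each centroid to the mean of its assigned points
        for j in range(len(centroids)):
            members = [p for p, a in zip(points, assignments) if a == j]
            if members:
                centroids[j] = tuple(sum(c) / len(members) for c in zip(*members))
    return assignments, centroids

points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.5),
          (8.0, 8.0), (9.0, 9.5), (8.5, 9.0)]
assignments, centroids = k_means(points, [points[0], points[3]])
print(assignments)
print(centroids)
```

Note that no labels are passed in anywhere: the grouping comes entirely from distances between the points. In practice the result depends on the initial centroids, so implementations commonly run several random initializations and keep the best one.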

Evaluation in unsupervised learning is more challenging than in supervised learning because no ground truth labels exist. Assessment relies on internal metrics that measure cluster cohesion and separation, preserved variance, or reconstruction error, or on domain experts judging whether the discovered patterns are meaningful and useful.
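One widely used internal metric for cohesion and separation is the silhouette score. The sketch below is a direct, unoptimized version that assumes Euclidean distance and at least two clusters; the function name, toy points, and the two candidate labelings are made up for the example.

```python
import math

def silhouette_score(points, labels):
    scores = []
    for i, p in enumerate(points):
        # a: mean distance to the other members of the point's own cluster
        own = [math.dist(p, q) for j, q in enumerate(points)
               if labels[j] == labels[i] and j != i]
        if not own:
            scores.append(0.0)  # common convention for single-point clusters
            continue
        a = sum(own) / len(own)
        # b: mean distance to the members of the nearest other cluster
        b = min(
            sum(math.dist(p, q) for q, lab in zip(points, labels) if lab == other)
            / labels.count(other)
            for other in set(labels) if other != labels[i]
        )
        scores.append((b - a) / max(a, b))
    return sum(scores) / len(scores)

points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.5),
          (8.0, 8.0), (9.0, 9.5), (8.5, 9.0)]
good_score = silhouette_score(points, [0, 0, 0, 1, 1, 1])
bad_score = silhouette_score(points, [0, 1, 0, 1, 0, 1])
print(round(good_score, 3), round(bad_score, 3))
```

Scores near 1 indicate tight, well-separated clusters, while scores near 0 or below suggest overlapping or poorly assigned clusters. A high score still says nothing about whether the clusters are useful for the business question, which is where domain review comes in.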

Types of Unsupervised Learning

Unsupervised learning encompasses several major categories:

Clustering groups data points into clusters where members share similar characteristics. Points within a cluster should be more similar to each other than to points in other clusters.

Key clustering algorithms include:

- K-Means: Partitions data into k clusters by minimizing within-cluster variance. It’s fast, scalable, and widely used but requires specifying the number of clusters beforehand and assumes spherical clusters.

- Hierarchical Clustering: Builds a tree of clusters through agglomerative (bottom-up) or divisive (top-down) approaches. It doesn’t require pre-specifying cluster numbers and provides a dendrogram visualizing cluster relationships at different granularities.

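- DBSCAN (Density-Based Spatial Clustering of Applications with Noise): Identifies clusters as dense regions separated by sparse regions. It discovers clusters of arbitrary shape and does not need the number of clusters in advance, but it requires choosing a neighborhood radius and a minimum point count, and it struggles when clusters have very different densities.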

- Gaussian Mixture Models (GMM): Assumes data comes from a mixture of Gaussian distributions and assigns probabilities of cluster membership. It’s more flexible than K-Means, handling elliptical clusters and providing probabilistic cluster assignments.

- Mean Shift: Identifies clusters by finding modes in the feature space density. It doesn’t require specifying cluster numbers but can be computationally intensive.

Dimensionality Reduction transforms high-dimensional data into fewer dimensions while retaining essential information. This simplifies data, enables visualization, and often improves subsequent analysis.

Major dimensionality reduction techniques include:

- Principal Component Analysis (PCA): Projects data onto orthogonal components capturing maximum variance. It’s the most common dimensionality reduction technique, efficient and interpretable.

- t-SNE (t-Distributed Stochastic Neighbor Embedding): Specializes in visualizing high-dimensional data in 2D or 3D by preserving local structure. It often produces clear, well-separated visualizations, but it does not reliably preserve global structure, and the standard algorithm cannot project new data points into an existing embedding.

- UMAP (Uniform Manifold Approximation and Projection): Similar to t-SNE but faster, more scalable, and better at preserving global structure while maintaining local relationships.

- Autoencoders: Neural networks that compress data into lower-dimensional representations (encoding) and reconstruct original data from these representations (decoding). They can capture complex non-linear patterns.
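To illustrate what "projecting onto the component capturing maximum variance" means, here is a minimal sketch of PCA for two-dimensional data that reduces it to one dimension. It uses the closed-form eigen-decomposition of a symmetric 2x2 covariance matrix, so it does not generalize beyond two features, and it assumes the points are not all identical. The function name and toy data are hypothetical.

```python
import math

def pca_first_component_2d(points):
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    centered = [(x - mean_x, y - mean_y) for x, y in points]

    # Sample covariance matrix [[a, b], [b, c]]
    a = sum(x * x for x, _ in centered) / (n - 1)
    c = sum(y * y for _, y in centered) / (n - 1)
    b = sum(x * y for x, y in centered) / (n - 1)

    # Largest eigenvalue of a symmetric 2x2 matrix (closed form)
    top_eigenvalue = (a + c) / 2 + math.sqrt(((a - c) / 2) ** 2 + b ** 2)

    # Matching eigenvector, normalized to unit length
    if b != 0:
        vx, vy = b, top_eigenvalue - a
    else:
        vx, vy = (1.0, 0.0) if a >= c else (0.0, 1.0)
    norm = math.hypot(vx, vy)
    direction = (vx / norm, vy / norm)

    # Share of total variance kept, and the 1D coordinates of each point
    explained_ratio = top_eigenvalue / (a + c)
    projected = [x * direction[0] + y * direction[1] for x, y in centered]
    return direction, explained_ratio, projected

points = [(1.0, 1.1), (2.0, 1.9), (3.0, 3.2), (4.0, 3.8)]
direction, explained_ratio, projected = pca_first_component_2d(points)
print(direction, round(explained_ratio, 4))
```

Because the toy points lie close to a straight line, a single component retains almost all of the variance. For data with more features, a linear algebra library would compute the eigenvectors or a singular value decomposition instead of this closed form.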

Association Rule Learning discovers interesting relationships, patterns, and associations in large datasets, particularly in transactional data.

- Apriori Algorithm: Identifies frequent itemsets and generates association rules. Retailers use it for market basket analysis, discovering which products customers frequently purchase together.

- FP-Growth: More efficient than Apriori for large datasets, using a compact data structure to find frequent patterns.
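Both algorithms above search for itemsets and rules that score well on a few standard measures: support, confidence, and lift. The sketch below computes only those measures for a single rule; it is not an implementation of Apriori or FP-Growth themselves. The function names and the toy transactions are assumptions.

```python
def support(transactions, itemset):
    # Fraction of transactions that contain every item in the itemset
    itemset = set(itemset)
    return sum(1 for t in transactions if itemset <= t) / len(transactions)

def rule_metrics(transactions, antecedent, consequent):
    support_both = support(transactions, set(antecedent) | set(consequent))
    # Confidence: how often the consequent appears when the antecedent does
    confidence = support_both / support(transactions, antecedent)
    # Lift: confidence relative to the consequent's baseline frequency
    lift = confidence / support(transactions, consequent)
    return support_both, confidence, lift

# Hypothetical shopping baskets
transactions = [
    {"bread", "butter", "milk"},
    {"bread", "butter"},
    {"bread", "jam"},
    {"milk", "eggs"},
    {"bread", "butter", "eggs"},
]
rule_support, rule_confidence, rule_lift = rule_metrics(
    transactions, {"bread"}, {"butter"}
)
print(rule_support, rule_confidence, rule_lift)
```

For the rule "bread implies butter", a lift above 1 means butter shows up in bread baskets more often than its overall frequency would predict. Rule-mining algorithms differ mainly in how efficiently they enumerate candidate itemsets before scoring them this way.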

Anomaly Detection identifies unusual data points, outliers, or anomalies that deviate significantly from normal patterns.

- Isolation Forest: Isolates anomalies by randomly partitioning data. Anomalies are isolated more quickly than normal points.

- One-Class SVM: Learns a boundary around normal data, identifying points outside this boundary as anomalies.

- Local Outlier Factor (LOF): Identifies outliers based on local density deviation compared to neighbors.
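The methods above are more sophisticated than can be shown briefly, so the following sketch substitutes a simpler distance-based stand-in: it scores each point by its average distance to its k nearest neighbors. This is not Isolation Forest, One-Class SVM, or LOF, only an illustration of the shared idea that anomalies sit far from their neighbors. The function name and data are invented.

```python
import math

def knn_outlier_scores(points, k=2):
    scores = []
    for i, p in enumerate(points):
        # Distances from this point to every other point, smallest first
        distances = sorted(
            math.dist(p, q) for j, q in enumerate(points) if j != i
        )
        # Higher mean distance to the k nearest neighbors = more isolated
        scores.append(sum(distances[:k]) / k)
    return scores

# Four points in a tight group plus one point far away
points = [(1.0, 1.0), (1.2, 0.9), (0.9, 1.1), (1.1, 1.2), (8.0, 8.0)]
scores = knn_outlier_scores(points)
most_anomalous = max(range(len(points)), key=scores.__getitem__)
print(most_anomalous, [round(s, 2) for s in scores])
```

The isolated point receives a score many times larger than the others. Unlike LOF, this score uses absolute distances, so it can mislabel points in legitimately sparse regions; comparing each point's density with that of its neighbors is what LOF adds.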

Real-World Applications of Unsupervised Learning

Unsupervised learning enables diverse applications:

Customer Segmentation: Businesses cluster customers based on purchasing behavior, demographics, and preferences without predefined segments. These data-driven segments inform targeted marketing, personalized recommendations, and product development. Retailers might discover unexpected customer groups with unique needs.

Image and Video Compression: Dimensionality reduction techniques compress images and videos by representing them in fewer dimensions while maintaining visual quality. Autoencoders learn efficient compressed representations.

Anomaly Detection and Fraud Prevention: Financial institutions identify unusual transactions that might indicate fraud. Network security systems detect anomalous traffic patterns suggesting cyber attacks. Manufacturing sensors identify equipment behaving abnormally, predicting failures.

Recommendation Systems: E-commerce and streaming platforms use unsupervised learning to discover patterns in user behavior and item characteristics. Collaborative filtering identifies users with similar preferences or items frequently consumed together, enabling personalized recommendations without explicit rating labels.

Genomics and Bioinformatics: Researchers cluster genes with similar expression patterns, discover patient subgroups with different disease characteristics, and identify genetic markers. Unsupervised learning reveals biological structures and relationships not obvious through traditional analysis.

Market Basket Analysis: Retailers analyze transaction data to discover which products customers frequently purchase together. These insights inform store layouts, product placement, promotional bundling, and inventory management.

Document Organization: Unsupervised learning clusters documents by topic without predefined categories. News aggregators group related articles, research databases organize papers, and search engines improve result relevance through discovered topical structure.

Natural Language Processing: Topic modeling techniques like Latent Dirichlet Allocation (LDA) discover themes in document collections. Word embeddings (Word2Vec, GloVe) learn vector representations capturing semantic relationships between words.

Advantages of Unsupervised Learning

Unsupervised learning provides unique benefits:

No Labeling Required: The most significant advantage is eliminating expensive and time-consuming data labeling. Organizations can leverage vast amounts of unlabeled data, which is often abundantly available.

Discovery of Unknown Patterns: Unsupervised algorithms can reveal unexpected structures, relationships, and categories humans hadn’t considered. This exploratory capability leads to novel insights and discoveries.

Scalability with Data Volume: As unlabeled data is plentiful, unsupervised learning can leverage massive datasets, potentially discovering patterns that only emerge at scale.

Preprocessing and Feature Engineering: Unsupervised techniques like dimensionality reduction and clustering often serve as preprocessing steps for supervised learning, improving performance by creating better features or reducing noise.

Adaptability: Some unsupervised methods, such as online or mini-batch clustering variants, can be updated incrementally as new data arrives instead of being retrained from scratch, making them suitable for evolving datasets.

Challenges and Limitations of Unsupervised Learning

Unsupervised learning faces distinct challenges:

Evaluation Difficulty: Without ground truth labels, assessing result quality is subjective and challenging. What constitutes “good” clusters or “meaningful” patterns often requires domain expertise and business context.

Interpretation Complexity: Discovered patterns may be statistically significant but not practically meaningful. Understanding and interpreting results requires careful analysis and domain knowledge.

Parameter Sensitivity: Many unsupervised algorithms require hyperparameter specification (number of clusters in K-Means, perplexity in t-SNE) that significantly affect results. Choosing appropriate values often requires trial, error, and domain expertise.

Computational Complexity: Some unsupervised methods scale poorly to large datasets. Standard agglomerative hierarchical clustering, for example, works from pairwise distances, so its cost grows at least quadratically with the number of points.

Lack of Guarantee: No guarantee exists that discovered patterns align with business objectives or yield actionable insights. Results might be interesting but not useful.

Ambiguity in Results: Different algorithms or parameter settings may produce different clustering or pattern results, all potentially valid, creating ambiguity in choosing the “best” solution.

Supervised vs Unsupervised Learning: Direct Comparison

Understanding the key differences between supervised and unsupervised learning helps in selecting the appropriate approach for specific problems.

Data Requirements

Supervised Learning: Requires labeled datasets where each input has a corresponding output label. Creating these labels often involves manual annotation, expert knowledge, or historical records. The quality and quantity of labeled data directly impact model performance.

Unsupervised Learning: Works with unlabeled data containing only input features without target outputs. This dramatically reduces data preparation costs and enables working with vast amounts of readily available data.

Learning Objectives

Supervised Learning: Aims to learn a mapping function from inputs to outputs for predicting labels on new, unseen data. The goal is predictive accuracy on a specific task defined by the labels.

Unsupervised Learning: Seeks to discover inherent structure, patterns, and relationships within data. The goal is exploratory, revealing organization and characteristics not explicitly defined beforehand.

Algorithms and Techniques

Supervised Learning: Employs classification algorithms (logistic regression, SVM, decision trees, neural networks) for categorical outputs and regression algorithms (linear regression, polynomial regression, neural networks) for continuous outputs.

Unsupervised Learning: Uses clustering algorithms (K-Means, hierarchical clustering, DBSCAN), dimensionality reduction (PCA, t-SNE, autoencoders), association rule learning (Apriori), and anomaly detection techniques.

Evaluation Methods

Supervised Learning: Evaluates using clear metrics comparing predictions to ground truth labels: accuracy, precision, recall, F1-score for classification; MSE, RMSE, R-squared for regression. Cross-validation and holdout test sets provide robust performance estimates.
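For the regression metrics named above, the following sketch shows how MSE, RMSE, and R-squared are computed from true and predicted values. The function name `regression_metrics` and the numbers are illustrative assumptions.

```python
import math

def regression_metrics(y_true, y_pred):
    n = len(y_true)
    # Mean squared error and its square root
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    mse = sum(squared_errors) / n
    rmse = math.sqrt(mse)
    # R-squared: 1 minus residual variation over total variation
    mean_true = sum(y_true) / n
    ss_total = sum((t - mean_true) ** 2 for t in y_true)
    r_squared = 1.0 - sum(squared_errors) / ss_total
    return mse, rmse, r_squared

y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.5, 5.5, 7.0, 9.0]
mse, rmse, r_squared = regression_metrics(y_true, y_pred)
print(mse, round(rmse, 4), r_squared)
```

RMSE is expressed in the same units as the target, which often makes it easier to communicate than MSE. R-squared compares the model against always predicting the mean, so a value near 1 means most of the variation is explained. This sketch assumes the true values are not all identical, since the total variation would otherwise be zero.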

Unsupervised Learning: Evaluation is more subjective and complex. Internal metrics like silhouette score or Davies-Bouldin index assess cluster quality. Often requires domain expert evaluation of whether discovered patterns are meaningful and actionable.

Use Case Scenarios

Supervised Learning: Ideal when you have specific prediction goals with labeled historical data. Use cases include spam detection, disease diagnosis, price prediction, credit scoring, and image classification—any scenario where you know what you want to predict and have examples of correct predictions.

Unsupervised Learning: Suited for exploratory analysis when you don’t know what patterns might exist. Use cases include customer segmentation without predefined categories, anomaly detection when normal behavior isn’t explicitly defined, data compression, and discovering natural groupings in data.

Computational Resources

Supervised Learning: Training complex models (deep neural networks) requires significant computational resources, especially for large labeled datasets. However, once trained, many models make predictions efficiently.

Unsupervised Learning: Computational requirements vary widely. Simple clustering may be fast, while dimensionality reduction on massive datasets can be intensive. Some methods like t-SNE don’t scale well to very large datasets.

Interpretability and Explainability

Supervised Learning: Some algorithms (linear regression, decision trees) provide transparent, interpretable models. Others (deep neural networks) are “black boxes” requiring specialized techniques for interpretation.

Unsupervised Learning: Interpretation depends on the algorithm and domain. Clustering results might be intuitive (customer segments) or cryptic (high-dimensional clusters). Understanding why certain patterns emerged requires careful analysis.

Practical Implementation

Supervised Learning: Implementation follows a structured workflow: collect labeled data, split into training/validation/test sets, train model, evaluate, tune hyperparameters, and deploy. The process is well-defined with established best practices.
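The data-splitting step in this workflow can be sketched as follows. The split fractions, the seed, and the function name `train_val_test_split` are assumptions for illustration; the key point is that the three sets are disjoint, so the test set stays unseen until the final evaluation.

```python
import random

def train_val_test_split(examples, val_fraction=0.15, test_fraction=0.15, seed=0):
    # Shuffle a copy so the original order is left untouched
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)

    n_test = round(len(shuffled) * test_fraction)
    n_val = round(len(shuffled) * val_fraction)

    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test

examples = list(range(20))  # stand-ins for (features, label) pairs
train, val, test = train_val_test_split(examples)
print(len(train), len(val), len(test))
```

A typical pattern is to fit on the training set, tune hyperparameters against the validation set, and report performance once on the test set. For time-ordered data a random shuffle is usually inappropriate, and a chronological split is the safer choice.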

Unsupervised Learning: Implementation is more exploratory and iterative. Try different algorithms, adjust parameters, evaluate results qualitatively and quantitatively, and refine based on domain knowledge. The process may involve more experimentation.

Choosing Between Supervised vs Unsupervised Learning

Selecting the right approach depends on several factors:

When to Use Supervised Learning

Choose supervised learning when:

- You have clear prediction goals with specific target variables you want to predict

- Labeled training data is available or can be obtained within reasonable time and budget constraints

- Historical data with outcomes exists (past transactions with fraud labels, historical sales data, medical diagnoses)

- Prediction accuracy is paramount and you can measure it against ground truth

- The problem fits classification or regression frameworks with well-defined input-output relationships

- Regulations require explainable decisions and you can use interpretable supervised algorithms

When to Use Unsupervised Learning

Choose unsupervised learning when:

- No labeled data is available or labeling is prohibitively expensive or time-consuming

- You need exploratory insights into data structure without knowing what patterns might exist

- The goal is discovering natural groupings or segments in data

- You want to detect anomalies without explicit examples of all anomaly types

- Dimensionality reduction is needed for visualization or preprocessing

- You’re working with massive unlabeled datasets where patterns might emerge only at scale

Hybrid Approaches

Many real-world applications benefit from combining both paradigms:

Semi-Supervised Learning: Uses a small amount of labeled data with a large amount of unlabeled data. The unlabeled data helps improve model performance beyond what’s achievable with labeled data alone. This approach works well when obtaining labels for the entire dataset is impractical but some labels are available.

Unsupervised Preprocessing: Apply unsupervised techniques (clustering, dimensionality reduction) to create features or reduce data complexity, then use supervised learning for final predictions. For example, cluster customers using unsupervised learning, then predict churn within each cluster using supervised models.

Active Learning: The model identifies which unlabeled examples would be most informative if labeled. Human experts label only these strategically selected examples, maximizing model improvement with minimal labeling effort.
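A common way to pick the "most informative" examples is uncertainty sampling: ask for labels where the current model is least sure. The sketch below assumes a binary classifier that outputs a probability for the positive class; the function name, the probabilities, and the labeling budget are hypothetical.

```python
def select_most_uncertain(probabilities, budget=2):
    # Rank unlabeled examples by how close the predicted probability is to 0.5,
    # where the model is least certain about the class
    ranked = sorted(
        range(len(probabilities)),
        key=lambda i: abs(probabilities[i] - 0.5),
    )
    return ranked[:budget]

# Predicted positive-class probabilities for five unlabeled examples
predicted = [0.97, 0.52, 0.10, 0.45, 0.80]
to_label = select_most_uncertain(predicted)
print(to_label)
```

The selected indices are sent to human annotators, the model is retrained with the new labels, and the cycle repeats. Other selection strategies exist, such as choosing examples on which several models disagree.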

Transfer Learning: Use unsupervised or supervised learning on one dataset to learn representations or patterns, then transfer this knowledge to a different but related supervised learning task with limited labeled data.

Advanced Topics in Supervised vs Unsupervised Learning

Deep Learning in Both Paradigms

Supervised Deep Learning: Convolutional Neural Networks (CNNs) for image classification, Recurrent Neural Networks (RNNs) and Transformers for sequential data, and deep feedforward networks for tabular data leverage massive labeled datasets to learn hierarchical representations. These models achieve state-of-the-art results in computer vision, natural language processing, and speech recognition.

Unsupervised Deep Learning: Autoencoders learn compressed data representations. Variational Autoencoders (VAEs) generate new data samples. Generative Adversarial Networks (GANs) learn to create realistic synthetic data (images, text, audio) by pitting two neural networks against each other. Self-supervised learning uses unlabeled data to create pseudo-labels through pretext tasks.

Reinforcement Learning: A Third Paradigm

Reinforcement learning is a distinct paradigm in which an agent learns a policy (a rule for choosing actions) through trial and error, receiving rewards or penalties for its actions. Unlike supervised learning, it is not given labeled examples of correct outputs; unlike unsupervised learning, its goal is not to discover structure in data but to maximize cumulative reward. Applications include game playing (AlphaGo), robotics, autonomous vehicles, and resource management.

The Role of Feature Engineering

Supervised Learning: High-quality features significantly impact model performance. Domain expertise guides creating, combining, and transforming raw inputs into more informative representations. Automated feature engineering tools are emerging, but human expertise remains valuable.

Unsupervised Learning: Features affect clustering and pattern discovery results. Proper scaling, normalization, and transformation ensure algorithms don’t bias toward features with larger magnitudes. Unsupervised methods like PCA can automatically create informative features.
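The scaling issue matters because distance-based algorithms treat a difference of 10,000 in one feature as far larger than a difference of 10 in another, regardless of meaning. A minimal sketch of standardization (z-scoring) follows; the feature names and values are invented, and the function assumes the column is not constant.

```python
import statistics

def standardize(column):
    # Rescale a feature to mean 0 and standard deviation 1
    mean = statistics.fmean(column)
    std = statistics.pstdev(column)
    return [(value - mean) / std for value in column]

# Hypothetical features on very different scales
income = [30000.0, 50000.0, 70000.0]
age = [25.0, 35.0, 45.0]

scaled_income = standardize(income)
scaled_age = standardize(age)
print(scaled_income)
print(scaled_age)
```

After standardization both features span the same range, so neither dominates a Euclidean distance. When a model will be applied to new data, the mean and standard deviation should be computed on the training data only and then reused.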

Handling Imbalanced Data

Supervised Learning: Class imbalance (one class much rarer than others, like fraud detection) requires special techniques: oversampling minority class, undersampling majority class, synthetic data generation (SMOTE), or adjusting class weights in the algorithm.
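As an example of adjusting class weights, the sketch below applies a common "balanced" heuristic in which each class is weighted by n_samples / (n_classes * class_count), so rarer classes contribute more to the training loss. The function name and the 95-to-5 label split are assumptions for illustration.

```python
from collections import Counter

def balanced_class_weights(labels):
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    # Rare classes get proportionally larger weights
    return {
        cls: n_samples / (n_classes * count)
        for cls, count in counts.items()
    }

# Hypothetical fraud dataset: 95 legitimate transactions, 5 fraudulent
labels = ["legit"] * 95 + ["fraud"] * 5
weights = balanced_class_weights(labels)
print(weights)
```

With these weights, an error on a fraud example costs the model about 19 times as much as an error on a legitimate one. Many libraries accept such weights directly as a training parameter, which avoids duplicating or discarding rows the way resampling does.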

Unsupervised Learning: Imbalanced data affects clustering as rare patterns might not form distinct clusters. Density-based methods (DBSCAN) often handle imbalance better than partition-based methods (K-Means).

Industry Applications: Supervised vs Unsupervised Learning in Practice

Healthcare

Supervised: Predict disease onset from patient records, classify medical images (cancer detection in mammograms), predict patient readmission risk, and recommend treatments based on similar historical cases.

Unsupervised: Discover patient subgroups with different disease progressions, cluster genes by expression patterns, detect anomalous patient vital signs indicating deterioration, and reduce dimensionality in genomic data for visualization and analysis.

Finance

Supervised: Credit scoring and loan default prediction, fraud detection in transactions, algorithmic trading based on market predictions, and customer churn prediction.

Unsupervised: Customer segmentation for targeted marketing, detecting unusual trading patterns indicating market manipulation, portfolio optimization through asset correlation analysis, and anomaly detection in financial reporting.

E-commerce and Retail

Supervised: Predict product demand for inventory management, classify customer support tickets, predict customer lifetime value, and detect fake reviews.

Unsupervised: Segment customers for personalized marketing, run market basket analysis for product recommendations, detect anomalous purchase patterns indicating fraud, and compress product images for faster website loading.

Manufacturing

Supervised: Predict equipment failures for preventive maintenance, classify product defects from images, forecast demand for production planning, and optimize process parameters.

Unsupervised: Cluster similar defect patterns to identify root causes, detect anomalous sensor readings indicating impending failures, reduce dimensionality in quality control measurements, and discover natural product groupings.

Marketing and Advertising

Supervised: Predict click-through rates for ads, classify customer sentiment from social media, forecast campaign performance, and identify high-value customers.

Unsupervised: Segment audiences for targeted campaigns, discover topic trends in social media, cluster content for recommendations, and identify influencers through network analysis.

Best Practices for Implementing Machine Learning Projects

Data Quality and Preparation

Regardless of paradigm, data quality determines model success. Clean data by handling missing values, removing duplicates, and correcting errors. Understand data distributions, outliers, and biases. Feature scaling ensures that algorithms treat all features fairly. For supervised learning, split data into training, validation, and test sets so that overfitting can be detected and performance estimates are unbiased.
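A three-way split can be sketched as follows. The 70/15/15 proportions and the fixed seed are assumptions chosen for illustration. The right fractions depend on how much data you have.

```python
import random

def train_val_test_split(items, val_frac=0.15, test_frac=0.15, seed=42):
    """Shuffle once, then carve out disjoint test, validation, and training sets."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_frac)
    n_val = round(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test

# 100 hypothetical example IDs
train, val, test = train_val_test_split(range(100))
```

The validation set is used for tuning decisions, while the test set is touched only once at the end. Time-ordered data usually calls for a chronological split instead of a random shuffle.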

Model Selection and Validation

Start with simple models as baselines before trying complex ones. For supervised learning, use cross-validation to robustly estimate performance. For unsupervised learning, try multiple algorithms and parameter settings, evaluating results both quantitatively and qualitatively. Document experiments, parameters, and results for reproducibility.
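The combination of a simple baseline and cross-validation can be sketched like this. The baseline predicts the mean of the training targets, and the target values are made up. Rows are assumed to be shuffled already.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for each of k contiguous folds."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    indices = list(range(n_samples))
    start = 0
    for size in fold_sizes:
        val_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, val_idx
        start += size

def cross_validate_mean_baseline(targets, k):
    """Mean absolute error per fold for a model that always predicts the training mean."""
    errors = []
    for train_idx, val_idx in k_fold_indices(len(targets), k):
        prediction = sum(targets[i] for i in train_idx) / len(train_idx)
        mae = sum(abs(targets[i] - prediction) for i in val_idx) / len(val_idx)
        errors.append(mae)
    return errors

# Hypothetical regression targets
targets = [3.0, 5.0, 4.0, 6.0, 5.0, 4.0, 7.0, 5.0, 4.0, 6.0]
fold_errors = cross_validate_mean_baseline(targets, k=3)
baseline_mae = sum(fold_errors) / len(fold_errors)
```

Any more complex model should beat `baseline_mae` under the same folds before it earns its extra complexity.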

Avoiding Common Pitfalls

Data Leakage: Ensure test data doesn’t influence training. Information from test sets leaking into training creates artificially inflated performance estimates that don’t generalize.

Overfitting: A model that performs excellently on training data but poorly on test data has overfitted. Use cross-validation to detect the gap, and use regularization, simpler models, or more training data to reduce it.

Underfitting: Models performing poorly on both training and test data are too simple to capture data patterns. Increase model complexity or engineer better features.

Ignoring Domain Knowledge: Statistical models can find spurious correlations. Incorporate domain expertise to ensure discovered patterns make practical sense.
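The data leakage pitfall is easy to introduce during preprocessing. A minimal sketch, using made-up numbers: scaling statistics are fitted on the training data only and then reused for the test data. Fitting them on the combined data lets test information leak into training.

```python
from statistics import mean, pstdev

def fit_scaler(values):
    """Learn the scaling statistics (mean, standard deviation) from the given values."""
    return mean(values), pstdev(values)

def apply_scaler(values, mu, sigma):
    """Standardize values with previously fitted statistics."""
    return [(x - mu) / sigma for x in values]

# Hypothetical feature values
train_values = [10.0, 12.0, 14.0, 16.0]
test_values = [30.0, 50.0]

# Correct: statistics come from the training data only
mu, sigma = fit_scaler(train_values)
train_scaled = apply_scaler(train_values, mu, sigma)
test_scaled = apply_scaler(test_values, mu, sigma)

# Leaky: the test values shift the statistics used to prepare the training data
leaky_mu, leaky_sigma = fit_scaler(train_values + test_values)
```

Here `mu` is 13.0 while `leaky_mu` is 22.0, so the leaky version has already "seen" that the test values are larger. The same rule applies to imputation, feature selection, and oversampling.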

Ethical Considerations

Machine learning models can perpetuate or amplify biases present in training data. Ensure training data represents diverse populations fairly. Evaluate models for disparate impact across demographic groups. Consider privacy implications, especially with sensitive data. Implement transparency and explainability where decisions affect individuals significantly. Establish human oversight for high-stakes applications.
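One simple way to check for disparate impact is to compare the rate of positive predictions across groups. The sketch below uses hypothetical predictions and group labels. The 0.8 threshold mentioned afterward is a rule of thumb, not a universal standard.

```python
def selection_rates(predictions, groups):
    """Share of positive (1) predictions within each demographic group."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == 1 else 0)
    return {group: positives[group] / totals[group] for group in totals}

def disparate_impact_ratio(predictions, groups):
    """Lowest selection rate divided by the highest (1.0 means equal rates)."""
    rates = selection_rates(predictions, groups)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0

# Hypothetical loan approvals: group A gets 6 of 10, group B gets 3 of 10
predictions = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7
groups = ["A"] * 10 + ["B"] * 10

rates = selection_rates(predictions, groups)
ratio = disparate_impact_ratio(predictions, groups)  # 0.3 / 0.6 = 0.5
```

A ratio below roughly 0.8 (the "four-fifths" rule of thumb) is often treated as a signal to investigate further. It is a screening metric and does not by itself establish that a model is fair or unfair.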

Future Trends in Supervised and Unsupervised Learning

Self-Supervised Learning

Self-supervised learning is an emerging paradigm that uses unlabeled data to create pseudo-labels through carefully designed pretext tasks. Models learn representations by predicting parts of the data from other parts (predicting the next word in a sentence, colorizing grayscale images). These learned representations transfer to downstream supervised tasks with minimal fine-tuning, combining the abundance of unlabeled data with supervised performance.
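The next-word pretext task can be sketched without any model at all. The point is that the training pairs come from the raw text itself. The whitespace tokenization and context size are simplifying assumptions.

```python
def next_word_pairs(text, context_size=3):
    """Turn unlabeled text into (context, next_word) training pairs."""
    tokens = text.lower().split()
    pairs = []
    for i in range(context_size, len(tokens)):
        context = tuple(tokens[i - context_size:i])
        pairs.append((context, tokens[i]))  # the "label" is just the next token
    return pairs

pairs = next_word_pairs("the cat sat on the mat", context_size=2)
# [(('the', 'cat'), 'sat'), (('cat', 'sat'), 'on'),
#  (('sat', 'on'), 'the'), (('on', 'the'), 'mat')]
```

No human annotated anything here. A supervised-style model can then be trained on these pairs to predict each target word from its context.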

Few-Shot and Zero-Shot Learning

Techniques enabling models to learn from very few examples (few-shot) or generalize to entirely new categories without any training examples (zero-shot). This reduces labeled data requirements dramatically, making machine learning more accessible and applicable to specialized domains with limited data.

Automated Machine Learning (AutoML)

Tools and platforms automating model selection, hyperparameter tuning, and feature engineering. AutoML democratizes machine learning, enabling non-experts to build effective models. However, domain expertise remains crucial for problem formulation, data preparation, and result interpretation.

Explainable AI (XAI)

Growing emphasis on making model decisions interpretable and explainable, especially in regulated industries and high-stakes applications. Techniques like SHAP values, LIME, and attention mechanisms provide insights into why models make specific predictions, building trust and enabling error diagnosis.

Edge Computing and Federated Learning

Deploying machine learning models on edge devices (smartphones, IoT sensors) for real-time inference without cloud connectivity. Federated learning trains models across decentralized devices without centralizing data, preserving privacy while leveraging distributed data sources.
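The aggregation step at the heart of federated learning can be sketched as a weighted average of client model parameters, in the style of federated averaging. The parameter vectors and client sizes below are made up, and a real system adds local training rounds, communication, and often secure aggregation.

```python
def federated_average(client_weights, client_sizes):
    """Average client parameter vectors, weighted by local dataset size."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(weights[j] * size for weights, size in zip(client_weights, client_sizes)) / total
        for j in range(n_params)
    ]

# Two hypothetical devices that trained locally on 100 and 300 examples
client_weights = [[1.0, 2.0], [3.0, 4.0]]
client_sizes = [100, 300]

global_weights = federated_average(client_weights, client_sizes)  # [2.5, 3.5]
```

Only the parameter vectors leave the devices. The raw training examples stay local, which is where the privacy benefit comes from.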

Quantum Machine Learning

Exploring quantum computing’s potential to accelerate machine learning algorithms, particularly for certain optimization problems and pattern recognition tasks. The field is still largely theoretical, and whether it will deliver practical advantages over classical hardware remains an open question.

Conclusion: Mastering Both Paradigms

Understanding supervised vs unsupervised learning is fundamental to leveraging machine learning effectively. These paradigms are not competitors but complementary tools in the machine learning toolkit. Supervised learning excels at prediction tasks with clear targets and labeled data, while unsupervised learning discovers hidden patterns and structures in unlabeled data.

Success in machine learning requires understanding when to apply each paradigm and when to combine both in hybrid approaches. As you embark on machine learning projects, carefully consider your data availability, business objectives, and resource constraints. Start with a clear problem definition, ensure data quality, choose appropriate algorithms, rigorously evaluate results, and iterate based on findings.

The field continues evolving rapidly with new techniques, algorithms, and applications emerging constantly. Stay curious, keep learning, and experiment with both supervised and unsupervised approaches. The distinction between supervised vs unsupervised learning provides the foundation, but mastery comes from practical experience applying these concepts to real-world problems.

Whether you’re building recommendation systems, detecting fraud, segmenting customers, predicting equipment failures, or discovering new drug candidates, understanding these fundamental learning paradigms will guide you toward effective solutions. The choice between supervised vs unsupervised learning shapes your entire approach, from data collection through model deployment, making this knowledge essential for any practitioner in the field of artificial intelligence and machine learning.

Resources for Continued Learning

Deepen your understanding through online courses from platforms like Coursera, edX, and Udacity offering comprehensive machine learning specializations. Andrew Ng’s Machine Learning course provides excellent foundations in both paradigms. Read foundational texts like “Pattern Recognition and Machine Learning” by Christopher Bishop, “The Elements of Statistical Learning” by Hastie, Tibshirani, and Friedman, and “Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow” by Aurélien Géron.

Participate in practical projects through Kaggle competitions, applying both supervised and unsupervised learning to real datasets. Contribute to open-source machine learning projects, gaining experience with production systems. Follow research developments through arXiv papers, conference proceedings (NeurIPS, ICML, CVPR), and machine learning blogs.

Join communities like r/MachineLearning on Reddit, Stack Overflow for technical questions, and local meetup groups for networking and knowledge sharing. Attend conferences, workshops, and webinars to stay current with the latest developments and connect with practitioners and researchers.

The journey to machine learning mastery is ongoing. Technologies evolve, new algorithms emerge, and applications expand into new domains. Your investment in understanding supervised vs unsupervised learning provides the foundation for continuous growth in this transformative field. Apply these concepts to real problems, learn from failures and successes, and contribute to the growing ecosystem of intelligent applications shaping our future.