Types of Machine Learning Algorithms: The Ultimate Proven Guide to Master Every ML Algorithm in 2026

If you’ve ever wondered how Netflix knows exactly which movie you’ll love, how your bank detects fraudulent transactions in milliseconds, how Google Translate converts languages with stunning accuracy, or how a self-driving car navigates complex traffic — the answer lies in one powerful concept: machine learning algorithms.

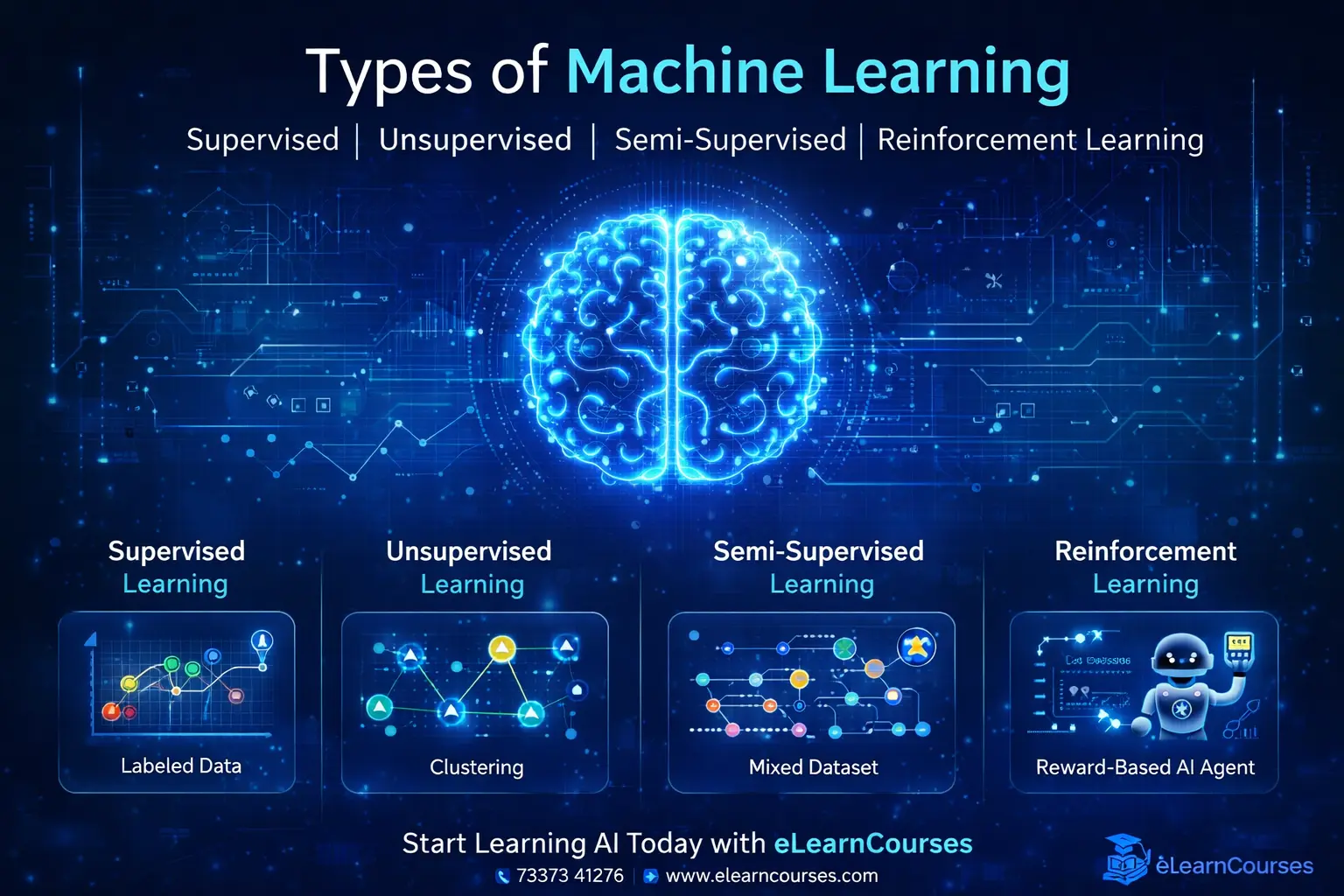

But here’s what most beginners don’t realize: there isn’t just one type of machine learning algorithm. There are dozens — each designed to solve specific kinds of problems, each with its own strengths, weaknesses, and ideal use cases. Understanding the types of machine learning algorithms is not just academic knowledge — it’s the practical foundation that separates a developer who can apply ML superficially from one who can choose the right tool for the right problem and build systems that truly work.

This ultimate guide covers all major types of machine learning algorithms in comprehensive depth — organized by learning paradigm and problem type. For each algorithm, you’ll find a clear explanation of how it works, when to use it, its advantages and disadvantages, real-world applications, and Python code examples. Whether you’re a beginner taking your first steps into ML or an intermediate practitioner looking to solidify your understanding, this guide delivers everything you need.

The types of machine learning algorithms covered in this guide include supervised learning algorithms (regression and classification), unsupervised learning algorithms (clustering, dimensionality reduction, and association), semi-supervised algorithms, reinforcement learning, and ensemble methods. We also cover how to choose the right algorithm for your specific problem.

Let’s dive in.

The Big Picture — How Machine Learning Algorithms Are Classified

Before exploring individual algorithms, it’s essential to understand the framework for classifying the types of machine learning algorithms. ML algorithms are primarily organized by how they learn:

Types of Machine Learning Algorithms
│
├── 1. Supervised Learning Algorithms
│   ├── Regression Algorithms (predict continuous values)
│   │   ├── Linear Regression
│   │   ├── Polynomial Regression
│   │   ├── Ridge & Lasso Regression
│   │   └── Support Vector Regression (SVR)
│   │
│   └── Classification Algorithms (predict categories)
│       ├── Logistic Regression
│       ├── Decision Trees
│       ├── Random Forest
│       ├── Support Vector Machine (SVM)
│       ├── K-Nearest Neighbors (KNN)
│       ├── Naive Bayes
│       └── Neural Networks
│
├── 2. Unsupervised Learning Algorithms
│   ├── Clustering Algorithms
│   │   ├── K-Means Clustering
│   │   ├── DBSCAN
│   │   └── Hierarchical Clustering
│   │
│   ├── Dimensionality Reduction
│   │   ├── Principal Component Analysis (PCA)
│   │   ├── t-SNE
│   │   └── Autoencoders
│   │
│   └── Association Rule Learning
│       ├── Apriori Algorithm
│       └── FP-Growth
│
├── 3. Semi-Supervised Learning Algorithms
│
├── 4. Reinforcement Learning Algorithms
│   ├── Q-Learning
│   ├── Deep Q-Network (DQN)
│   └── Policy Gradient Methods
│
└── 5. Ensemble Learning Algorithms
    ├── Bagging (Random Forest)
    ├── Boosting (XGBoost, LightGBM, AdaBoost)
    └── Stacking

PART 1: Supervised Learning Algorithms

Supervised learning algorithms are trained on labeled data — every training example includes both the input features and the correct output label. The algorithm learns the mapping from inputs to outputs and can then make predictions on new, unseen data.

Supervised learning is divided into two sub-categories: Regression (predicting continuous values) and Classification (predicting discrete categories).

Section A: Regression Algorithms

1. Linear Regression

What It Is: Linear Regression is the simplest and most foundational regression algorithm. It models the relationship between one or more input features and a continuous output variable by fitting a straight line (or hyperplane in multiple dimensions) through the data.

The Equation:

y = β₀ + β₁x₁ + β₂x₂ + ... + βₙxₙ + ε

Where y is the target value being modeled, the β values are coefficients learned from the data, the x values are input features, and ε is the error term.

How It Learns: Linear Regression minimizes the Sum of Squared Errors (SSE) — the sum of the squared differences between actual and predicted values. This is called the Ordinary Least Squares (OLS) method.
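To make the OLS idea concrete, here is a minimal sketch that solves the least-squares problem directly with NumPy. The synthetic data, the chosen true coefficients (3.0, 2.0, -1.5), and all variable names are assumptions made for illustration; they are not part of the house-price example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (assumed for illustration): y = 3 + 2*x1 - 1.5*x2 + noise
X = rng.normal(size=(200, 2))
y = 3.0 + 2.0 * X[:, 0] - 1.5 * X[:, 1] + rng.normal(scale=0.1, size=200)

# Prepend a column of ones so the intercept is learned like any other coefficient
X_design = np.column_stack([np.ones(len(X)), X])

# Least-squares solution: the coefficients that minimize the sum of squared errors
beta, *_ = np.linalg.lstsq(X_design, y, rcond=None)

residuals = y - X_design @ beta
sse = float(np.sum(residuals ** 2))

print("Estimated [intercept, b1, b2]:", np.round(beta, 3))
print("Sum of squared errors:", round(sse, 3))
```

Nudging any coefficient away from the fitted values increases the SSE, which is exactly what "minimizes" means here.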

When to Use:

- Relationship between features and target is approximately linear

- You need an interpretable model (coefficients show feature influence)

- As a baseline before trying complex models

Advantages:

- Fast to train and predict

- Highly interpretable — coefficients directly show feature impact

- Works well when assumptions hold

- Good baseline model

Disadvantages:

- Assumes linear relationship (real data is often non-linear)

- Sensitive to outliers

- Poor performance on complex, high-dimensional data

- Assumes no multicollinearity between features

Real-World Applications:

- House price prediction based on size, location, amenities

- Sales forecasting based on advertising spend

- Temperature prediction based on historical weather data

- Medical dosage calculation based on patient weight/age

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

from sklearn.linear_model import LinearRegression

from sklearn.model_selection import train_test_split

from sklearn.metrics import mean_squared_error, r2_score

from sklearn.preprocessing import StandardScaler

# Generate realistic house price dataset

np.random.seed(42)

n = 300

house_size = np.random.normal(1500, 400, n) # Square feet

bedrooms = np.random.randint(1, 6, n)

age = np.random.randint(1, 50, n)

location_score = np.random.uniform(1, 10, n)

# Price depends on all features + noise

price = (

150 * house_size +

15000 * bedrooms -

800 * age +

20000 * location_score +

np.random.normal(0, 15000, n)

)

# Create DataFrame

df = pd.DataFrame({

'house_size': house_size,

'bedrooms': bedrooms,

'age': age,

'location_score': location_score,

'price': price

})

X = df.drop('price', axis=1)

y = df['price']

X_train, X_test, y_train, y_test = train_test_split(

X, y, test_size=0.2, random_state=42

)

# Train Linear Regression

lr = LinearRegression()

lr.fit(X_train, y_train)

y_pred = lr.predict(X_test)

print("=== LINEAR REGRESSION — HOUSE PRICE PREDICTION ===")

print(f"\nModel Coefficients:")

for feature, coef in zip(X.columns, lr.coef_):
    print(f" {feature}: ${coef:,.2f}")
print(f" Intercept: ${lr.intercept_:,.2f}")

print(f"\nR² Score: {r2_score(y_test, y_pred):.4f}")

print(f"RMSE: ${np.sqrt(mean_squared_error(y_test, y_pred)):,.2f}")

# Actual vs Predicted plot

plt.figure(figsize=(10, 6))

plt.scatter(y_test, y_pred, alpha=0.6, color='steelblue', edgecolors='white')

plt.plot([y_test.min(), y_test.max()],

[y_test.min(), y_test.max()],

'r--', linewidth=2, label='Perfect Prediction')

plt.xlabel('Actual Price ($)')

plt.ylabel('Predicted Price ($)')

plt.title('Linear Regression: Actual vs Predicted House Prices')

plt.legend()

plt.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

2. Polynomial Regression

What It Is: Polynomial Regression extends Linear Regression by adding polynomial features — allowing it to model non-linear relationships between features and the target variable.

The Equation:

y = β₀ + β₁x + β₂x² + β₃x³ + ... + βₙxⁿ

When to Use:

- Data shows a curved, non-linear relationship

- Linear regression underfits the data

- When you can see a polynomial pattern in your scatter plot
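Polynomial regression is still linear regression under the hood: the model stays linear in the coefficients, and only the features are expanded. The sketch below, which assumes a noise-free cubic chosen purely for illustration, builds the expanded feature matrix by hand and recovers the coefficients with ordinary least squares.

```python
import numpy as np

# Noise-free cubic (assumed example): y = 2x^3 - 5x^2 + x
x = np.linspace(-3, 3, 50)
y = 2 * x**3 - 5 * x**2 + x

# Expanded design matrix with columns [1, x, x^2, x^3]
X_poly = np.vander(x, N=4, increasing=True)

# Ordinary least squares on the expanded features
coefs, *_ = np.linalg.lstsq(X_poly, y, rcond=None)

# Coefficient order matches the columns: constant, x, x^2, x^3
print(np.round(coefs, 6))
```

Because the data here contains no noise, the fitted coefficients match the generating polynomial; with noisy data, a higher degree fits the training points ever more closely and starts to overfit.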

from sklearn.preprocessing import PolynomialFeatures

from sklearn.pipeline import Pipeline

# Generate non-linear data

X_nonlinear = np.linspace(-3, 3, 100).reshape(-1, 1)

y_nonlinear = 2 * X_nonlinear**3 - 5 * X_nonlinear**2 + X_nonlinear + np.random.normal(0, 2, (100, 1))

X_tr, X_te, y_tr, y_te = train_test_split(

X_nonlinear, y_nonlinear.ravel(), test_size=0.2, random_state=42

)

plt.figure(figsize=(15, 5))

degrees = [1, 3, 8]

for i, degree in enumerate(degrees, 1):
    pipeline = Pipeline([
        ('poly', PolynomialFeatures(degree=degree)),
        ('linear', LinearRegression())
    ])
    pipeline.fit(X_tr, y_tr)
    y_pred_plot = pipeline.predict(X_nonlinear)
    train_r2 = r2_score(y_tr, pipeline.predict(X_tr))
    test_r2 = r2_score(y_te, pipeline.predict(X_te))

    plt.subplot(1, 3, i)
    plt.scatter(X_nonlinear, y_nonlinear, alpha=0.5, color='steelblue', s=30)
    plt.plot(X_nonlinear, y_pred_plot, color='red', linewidth=2)
    plt.title(f'Degree {degree}\nTrain R²={train_r2:.3f} | Test R²={test_r2:.3f}')
    plt.grid(True, alpha=0.3)

plt.suptitle('Polynomial Regression: Underfitting vs Good Fit vs Overfitting',

fontsize=13, y=1.02)

plt.tight_layout()

plt.show()

3. Ridge and Lasso Regression (Regularized Linear Models)

What They Are: Ridge and Lasso are regularized versions of Linear Regression that add a penalty term to the loss function to prevent overfitting by constraining coefficient magnitudes.

- Ridge Regression (L2): Adds penalty proportional to the square of coefficients → shrinks coefficients toward zero but rarely to exactly zero

- Lasso Regression (L1): Adds penalty proportional to the absolute value of coefficients → can shrink coefficients to exactly zero (automatic feature selection)

- Elastic Net: Combines both L1 and L2 penalties
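Why does L1 produce exact zeros while L2 only shrinks? A simplified one-coefficient view helps. The sketch below assumes an orthonormal design, where each coefficient can be penalized independently; the starting coefficients and the penalty strength are hypothetical. Note that scikit-learn's Ridge and Lasso scale their penalties differently, so the numbers here are illustrative rather than a reproduction of those estimators.

```python
import numpy as np

def ridge_shrink(b, lam):
    # Minimizer of 0.5*(b - w)**2 + 0.5*lam*w**2: proportional shrinkage
    return b / (1.0 + lam)

def lasso_shrink(b, lam):
    # Minimizer of 0.5*(b - w)**2 + lam*|w|: soft-thresholding
    return np.sign(b) * np.maximum(np.abs(b) - lam, 0.0)

# Hypothetical unpenalized (OLS) coefficients
ols_coefs = np.array([4.0, -2.0, 0.5, -0.2])
lam = 1.0

ridge = ridge_shrink(ols_coefs, lam)
lasso = lasso_shrink(ols_coefs, lam)

print("Ridge:", ridge)
print("Lasso:", lasso)
print("Non-zero after Ridge:", np.count_nonzero(ridge))
print("Non-zero after Lasso:", np.count_nonzero(lasso))
```

Ridge scales every coefficient by the same factor, so small coefficients stay small but non-zero. Lasso subtracts a fixed amount, so any coefficient smaller than the penalty is set to exactly zero.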

When to Use:

- Dataset has many features with potential multicollinearity (Ridge)

- Feature selection is important — want sparse models (Lasso)

- High-dimensional data (many features, relatively fewer samples)

from sklearn.linear_model import Ridge, Lasso, ElasticNet

from sklearn.preprocessing import StandardScaler

# Compare models on house price data

models_reg = {

'Linear Regression': LinearRegression(),

'Ridge (α=1.0)': Ridge(alpha=1.0),

'Lasso (α=0.001)': Lasso(alpha=0.001),

'Elastic Net': ElasticNet(alpha=0.001, l1_ratio=0.5)

}

scaler = StandardScaler()

X_train_sc = scaler.fit_transform(X_train)

X_test_sc = scaler.transform(X_test)

print("=== REGULARIZATION COMPARISON ===")

print(f"{'Model':<25} {'Train R²':>10} {'Test R²':>10} {'Non-zero Coefs':>15}")

print("-" * 65)

for name, model in models_reg.items():
    model.fit(X_train_sc, y_train)
    train_r2 = r2_score(y_train, model.predict(X_train_sc))
    test_r2 = r2_score(y_test, model.predict(X_test_sc))
    if hasattr(model, 'coef_'):
        nonzero = np.sum(np.abs(model.coef_) > 1e-6)
    else:
        nonzero = len(X.columns)
    print(f"{name:<25} {train_r2:>10.4f} {test_r2:>10.4f} {nonzero:>15}")

Section B: Classification Algorithms

4. Logistic Regression

What It Is: Despite its name, Logistic Regression is a classification algorithm. It uses the sigmoid function to transform a linear combination of features into a probability between 0 and 1, which is then thresholded to produce a class prediction.

The Sigmoid Function:

σ(z) = 1 / (1 + e^(-z))

This S-shaped curve maps any real number to a probability between 0 and 1.
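A small sketch shows the full path from features to a class label: linear combination, sigmoid, then threshold. The weights, bias, and feature values below are made up for illustration and are not learned from any dataset.

```python
import math

def sigmoid(z):
    """Map any real number z to the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def predict_class(features, weights, bias, threshold=0.5):
    # Linear combination of the features, squashed into a probability
    z = bias + sum(w * x for w, x in zip(weights, features))
    prob = sigmoid(z)
    # Threshold the probability to get a hard class label (0 or 1)
    return prob, int(prob >= threshold)

# Hypothetical weights and bias for a two-feature model
prob, label = predict_class([2.0, -1.0], weights=[0.8, 0.4], bias=-0.5)
print(f"probability={prob:.3f}, class={label}")
```

The 0.5 threshold is only a default. Raising it makes the classifier more conservative about predicting the positive class, which matters when false positives are costly.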

Types:

- Binary Logistic Regression — Two classes (spam/not spam, disease/healthy)

- Multinomial Logistic Regression — Three or more classes without natural order

- Ordinal Logistic Regression — Classes with natural ordering (low/medium/high)

When to Use:

- Binary or multi-class classification

- When you need probability estimates alongside class predictions

- As a fast, interpretable baseline classifier

- When the relationship between features and log-odds is approximately linear

Real-World Applications:

- Email spam detection

- Credit default prediction

- Disease diagnosis (diabetic/non-diabetic)

- Customer churn prediction

from sklearn.linear_model import LogisticRegression

from sklearn.metrics import (classification_report, confusion_matrix,

roc_auc_score, roc_curve)

import seaborn as sns

# Credit risk classification dataset

np.random.seed(42)

n = 1000

credit_score = np.random.normal(650, 100, n).clip(300, 850)

income = np.random.normal(50000, 20000, n).clip(15000, 200000)

debt_ratio = np.random.uniform(0.1, 0.9, n)

num_accounts = np.random.randint(1, 15, n)

payment_history = np.random.uniform(0, 1, n)

# Synthetic default probability from a logistic function (coefficients are illustrative, not calibrated)

default_prob = 1 / (1 + np.exp(

0.008 * credit_score -

0.00002 * income -

2 * payment_history +

1.5 * debt_ratio - 2

))

default = (np.random.random(n) < default_prob).astype(int)

credit_df = pd.DataFrame({

'credit_score': credit_score,

'income': income,

'debt_ratio': debt_ratio,

'num_accounts': num_accounts,

'payment_history': payment_history,

'default': default

})

X_cr = credit_df.drop('default', axis=1)

y_cr = credit_df['default']

X_tr, X_te, y_tr, y_te = train_test_split(

X_cr, y_cr, test_size=0.2, random_state=42, stratify=y_cr

)

sc = StandardScaler()

X_tr_sc = sc.fit_transform(X_tr)

X_te_sc = sc.transform(X_te)

log_reg = LogisticRegression(random_state=42, max_iter=1000)

log_reg.fit(X_tr_sc, y_tr)

y_pred_lr = log_reg.predict(X_te_sc)

y_prob_lr = log_reg.predict_proba(X_te_sc)[:, 1]

print("=== LOGISTIC REGRESSION — CREDIT DEFAULT PREDICTION ===")

print(f"Accuracy: {(y_pred_lr == y_te).mean():.4f}")

print(f"AUC-ROC: {roc_auc_score(y_te, y_prob_lr):.4f}")

print("\nClassification Report:")

print(classification_report(y_te, y_pred_lr,

target_names=['No Default', 'Default']))

# Confusion Matrix

cm = confusion_matrix(y_te, y_pred_lr)

plt.figure(figsize=(7, 5))

sns.heatmap(cm, annot=True, fmt='d', cmap='Blues',

xticklabels=['No Default', 'Default'],

yticklabels=['No Default', 'Default'])

plt.title('Logistic Regression — Confusion Matrix')

plt.ylabel('Actual')

plt.xlabel('Predicted')

plt.tight_layout()

plt.show()

5. Decision Tree Algorithm

What It Is: A Decision Tree is a flowchart-like structure that makes decisions by recursively splitting data based on feature values. At each internal node, the algorithm asks a question about a feature. Branches represent answers. Leaf nodes represent final predictions.

Splitting Criteria:

- Gini Impurity — Measures how often a randomly chosen element of a node would be misclassified if it were labeled at random according to that node's class distribution (default in scikit-learn)

- Information Gain (Entropy) — Measures the reduction in uncertainty after a split

- Variance Reduction — For regression trees
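The three criteria above are short formulas, and seeing them as code can help. This is a minimal sketch in plain Python; the function names are my own, and real libraries compute these quantities far more efficiently.

```python
from collections import Counter
import math

def gini(labels):
    # 1 - sum of squared class proportions; 0 means the node is pure
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def entropy(labels):
    # Shannon entropy in bits; information gain is the drop in entropy after a split
    n = len(labels)
    return -sum((count / n) * math.log2(count / n)
                for count in Counter(labels).values())

def variance(values):
    # Used for regression trees: a good split reduces the variance within each child
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)

mixed = ["spam", "spam", "ham", "ham"]
pure = ["ham", "ham", "ham"]
print(gini(mixed), entropy(mixed))  # maximally mixed two-class node
print(gini(pure), entropy(pure))    # pure node
```

For a two-class node, Gini peaks at 0.5 and entropy at 1 bit when the classes are evenly mixed. Both fall to zero for a pure node, which is why either can guide the same kind of split search.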

How the Tree is Built:

- Start with all data at the root node

- Find the feature and threshold that best separates classes (minimizes impurity)

- Split data into two branches

- Recursively repeat for each branch

- Stop when a stopping criterion is met (max depth, min samples, pure leaf)
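The heart of tree building is the search for the best split. The sketch below handles a single numeric feature and a single split, using midpoints between adjacent distinct values as candidate thresholds. The toy measurements and the `best_split` helper are assumptions for illustration; a full tree would repeat this search over every feature and then recurse into each branch.

```python
from collections import Counter

def gini(labels):
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_split(values, labels):
    """Return (threshold, weighted_gini) for the best binary split on one feature."""
    best_threshold, best_score = None, float("inf")
    candidates = sorted(set(values))
    for low, high in zip(candidates, candidates[1:]):
        threshold = (low + high) / 2  # midpoint between adjacent distinct values
        left = [y for x, y in zip(values, labels) if x <= threshold]
        right = [y for x, y in zip(values, labels) if x > threshold]
        # Impurity of the two children, weighted by how many samples each receives
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best_score:
            best_threshold, best_score = threshold, score
    return best_threshold, best_score

# Toy petal lengths (cm) and species labels, made up for this example
petal_length = [1.4, 1.3, 1.5, 4.7, 4.5, 5.1]
species = ["setosa", "setosa", "setosa", "other", "other", "other"]

threshold, score = best_split(petal_length, species)
print(f"Best threshold: {threshold}, weighted Gini: {score}")
```

A weighted Gini of zero means both children are pure, so this branch would stop splitting.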

When to Use:

- When model interpretability is critical (healthcare, finance, legal)

- Non-linear relationships in data

- Mixed feature types (numerical and categorical)

- As a building block for ensemble methods

Advantages:

- Highly interpretable — easy to explain decisions

- No feature scaling required

- Handles both numerical and categorical features (scikit-learn's trees expect categorical features to be numerically encoded first)

- Captures non-linear relationships

- Fast prediction

Disadvantages:

- Prone to overfitting (especially deep trees)

- High variance — small data changes create different trees

- Biased toward features with more categories

- Not suitable for extrapolation

from sklearn.tree import DecisionTreeClassifier, plot_tree, export_text

from sklearn.datasets import load_iris

# Load Iris dataset

iris = load_iris()

X_iris, y_iris = iris.data, iris.target

feature_names = iris.feature_names

class_names = iris.target_names

X_tr_i, X_te_i, y_tr_i, y_te_i = train_test_split(

X_iris, y_iris, test_size=0.25, random_state=42

)

# Train with depth limit to prevent overfitting

dt = DecisionTreeClassifier(

max_depth=4,

min_samples_split=5,

min_samples_leaf=3,

criterion='gini',

random_state=42

)

dt.fit(X_tr_i, y_tr_i)

y_pred_dt = dt.predict(X_te_i)

print("=== DECISION TREE — IRIS CLASSIFICATION ===")

print(f"Training Accuracy: {dt.score(X_tr_i, y_tr_i):.4f}")

print(f"Test Accuracy: {dt.score(X_te_i, y_te_i):.4f}")

print(f"Tree Depth: {dt.get_depth()}")

print(f"Number of Leaves: {dt.get_n_leaves()}")

print("\nFeature Importances:")

for fname, imp in sorted(
    zip(feature_names, dt.feature_importances_),
    key=lambda x: x[1], reverse=True
):
    print(f" {fname}: {imp:.4f}")

# Visualize Decision Tree

plt.figure(figsize=(24, 12))

plot_tree(dt,

feature_names=feature_names,

class_names=class_names,

filled=True,

rounded=True,

fontsize=9,

impurity=True)

plt.title('Decision Tree — Iris Classification', fontsize=16)

plt.tight_layout()

plt.show()

# Text representation

print("\nDecision Tree Rules:")

print(export_text(dt, feature_names=list(feature_names)))

6. Random Forest Algorithm

What It Is: Random Forest is a powerful ensemble algorithm that builds a large number of decision trees and combines their predictions. Two key randomization techniques make each tree diverse:

- Bootstrap Sampling (Bagging) — Each tree is trained on a random sample (with replacement) of the training data

- Random Feature Selection — At each split, only a random subset of features is considered
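Both randomization steps are easy to sketch with NumPy. The sample and feature counts below are arbitrary assumptions, and the square-root rule for the number of candidate features is one common choice rather than the only one.

```python
import numpy as np

rng = np.random.default_rng(42)
n_samples, n_features = 10_000, 16

# Bootstrap sampling: draw n_samples row indices with replacement
boot_idx = rng.integers(0, n_samples, size=n_samples)
unique_fraction = np.unique(boot_idx).size / n_samples

# Random feature selection: candidate features for one split, no repeats
n_candidates = int(np.sqrt(n_features))
feature_subset = rng.choice(n_features, size=n_candidates, replace=False)

print(f"Distinct rows in the bootstrap sample: {unique_fraction:.1%}")
print("Candidate features for this split:", sorted(feature_subset.tolist()))
```

A bootstrap sample of size n contains roughly 63% of the distinct original rows, since the chance that a given row is never drawn approaches 1/e. The rows left out are what out-of-bag error estimates are computed on.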

Why It Works — The Wisdom of Crowds: Individual decision trees are high-variance models — they memorize training data easily. But when you combine many diverse, uncorrelated trees:

- Each tree makes different errors

- Errors cancel out across the ensemble

- The aggregate prediction is far more accurate and stable
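A back-of-the-envelope calculation shows how strong this effect can be. The sketch below assumes an idealized case: every tree is correct with the same probability and the trees err independently. Real trees are correlated, so actual gains are smaller; treat the numbers as an upper-bound intuition, not a prediction.

```python
from math import comb

def majority_vote_accuracy(n_trees, p_correct):
    """Probability that more than half of n_trees independent voters are correct."""
    k_min = n_trees // 2 + 1
    return sum(
        comb(n_trees, k) * p_correct**k * (1 - p_correct) ** (n_trees - k)
        for k in range(k_min, n_trees + 1)
    )

# Each hypothetical tree is right only 60% of the time
for n_trees in (1, 11, 101):
    acc = majority_vote_accuracy(n_trees, 0.6)
    print(f"{n_trees:>3} trees -> majority vote correct with probability {acc:.3f}")
```

This is why Random Forest works so hard to decorrelate its trees: the closer the trees get to independent errors, the more of this benefit the ensemble captures.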

When to Use:

- Most tabular data problems as a strong baseline

- When you need robust performance without much tuning

- When feature importance ranking is needed

- When the dataset has mixed feature types and potential noise

from sklearn.ensemble import RandomForestClassifier

from sklearn.model_selection import cross_val_score

import warnings

warnings.filterwarnings('ignore')

# Load the Wine dataset (13 features, 3 classes)

from sklearn.datasets import load_wine

wine = load_wine()

X_w, y_w = wine.data, wine.target

X_tr_w, X_te_w, y_tr_w, y_te_w = train_test_split(

X_w, y_w, test_size=0.2, random_state=42

)

# Compare single tree vs random forest

dt_single = DecisionTreeClassifier(random_state=42)

rf_model = RandomForestClassifier(

n_estimators=100,

max_depth=10,

min_samples_split=5,

max_features='sqrt',

random_state=42,

n_jobs=-1

)

print("=== DECISION TREE vs RANDOM FOREST — WINE CLASSIFICATION ===")

for name, model in [('Decision Tree', dt_single), ('Random Forest', rf_model)]:
    model.fit(X_tr_w, y_tr_w)
    train_acc = model.score(X_tr_w, y_tr_w)
    test_acc = model.score(X_te_w, y_te_w)
    cv_scores = cross_val_score(model, X_w, y_w, cv=5)
    print(f"\n{name}:")
    print(f" Train Accuracy: {train_acc:.4f}")
    print(f" Test Accuracy: {test_acc:.4f}")
    print(f" CV Mean ± Std: {cv_scores.mean():.4f} ± {cv_scores.std():.4f}")

# Feature importance visualization

feat_imp = pd.DataFrame({

'Feature': wine.feature_names,

'Importance': rf_model.feature_importances_

}).sort_values('Importance', ascending=True)

plt.figure(figsize=(10, 8))

colors = plt.cm.viridis(np.linspace(0.3, 0.9, len(feat_imp)))

plt.barh(feat_imp['Feature'], feat_imp['Importance'],

color=colors, edgecolor='white')

plt.title('Random Forest Feature Importance — Wine Dataset', fontsize=14)

plt.xlabel('Importance Score')

plt.tight_layout()

plt.show()

7. Support Vector Machine (SVM)

What It Is: SVM finds the optimal decision boundary (hyperplane) that maximally separates classes with the widest possible margin. The data points closest to the hyperplane — called support vectors — define the margin.

Key Concepts:

- Hyperplane — The decision boundary (a line in 2D, plane in 3D, hyperplane in higher dimensions)

- Margin — Distance between the hyperplane and the nearest data points from each class

- Support Vectors — Data points that lie on the margin boundaries

- Kernel Trick — Computes similarities as if the data had been mapped to a higher-dimensional space, where the classes may become linearly separable, without ever computing that mapping explicitly

Common SVM Kernels:

| Kernel | Best For | Notes |
|---|---|---|
| Linear | Linearly separable data | Fast, interpretable |
| RBF (Gaussian) | Non-linear data | Most commonly used |
| Polynomial | Polynomial boundaries | Computationally expensive |
| Sigmoid | Neural network-like | Less commonly used |
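Each kernel in the table is just a similarity function between two points. The sketch below writes the four of them out in NumPy. The functional forms follow the usual textbook definitions, while the default values for `gamma`, `degree`, and `coef0` are assumptions chosen for the example.

```python
import numpy as np

def linear_kernel(x, z):
    return float(np.dot(x, z))

def rbf_kernel(x, z, gamma=0.5):
    # Similarity decays with squared distance; equals 1 when x == z
    return float(np.exp(-gamma * np.sum((x - z) ** 2)))

def polynomial_kernel(x, z, degree=3, gamma=1.0, coef0=1.0):
    return float((gamma * np.dot(x, z) + coef0) ** degree)

def sigmoid_kernel(x, z, gamma=0.1, coef0=0.0):
    return float(np.tanh(gamma * np.dot(x, z) + coef0))

a = np.array([1.0, 2.0])
b = np.array([2.0, 0.0])

print("linear :", linear_kernel(a, b))
print("rbf    :", round(rbf_kernel(a, b), 4))
print("poly   :", polynomial_kernel(a, b))
print("sigmoid:", round(sigmoid_kernel(a, b), 4))
```

An SVM never needs the high-dimensional coordinates themselves, only these pairwise similarity values, which is what makes the kernel trick computationally practical.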

When to Use:

- High-dimensional data (text classification, genomics)

- Clear margin of separation in the data

- Medium-sized datasets (SVMs scale poorly to very large datasets)

- When you need a maximum-margin classifier

from sklearn.svm import SVC

from sklearn.datasets import make_classification

from sklearn.inspection import DecisionBoundaryDisplay

# Compare SVM kernels

X_svm, y_svm = make_classification(

n_samples=300, n_features=2, n_informative=2,

n_redundant=0, n_clusters_per_class=1, random_state=42

)

X_tr_s, X_te_s, y_tr_s, y_te_s = train_test_split(

X_svm, y_svm, test_size=0.2, random_state=42

)

sc_svm = StandardScaler()

X_tr_sc = sc_svm.fit_transform(X_tr_s)

X_te_sc = sc_svm.transform(X_te_s)

kernels = ['linear', 'rbf', 'poly']

fig, axes = plt.subplots(1, 3, figsize=(18, 5))

print("=== SVM KERNEL COMPARISON ===")

for ax, kernel in zip(axes, kernels):
    svm = SVC(kernel=kernel, C=1.0, gamma='scale', random_state=42)
    svm.fit(X_tr_sc, y_tr_s)
    test_acc = svm.score(X_te_sc, y_te_s)
    print(f" {kernel.capitalize()} Kernel Accuracy: {test_acc:.4f}")

    DecisionBoundaryDisplay.from_estimator(
        svm, X_tr_sc, ax=ax, alpha=0.3,
        cmap=plt.cm.RdYlBu, response_method='predict'
    )
    scatter = ax.scatter(
        X_tr_sc[:, 0], X_tr_sc[:, 1],
        c=y_tr_s, cmap=plt.cm.RdYlBu,
        edgecolors='black', s=50, linewidth=0.5
    )
    ax.set_title(f'SVM {kernel.capitalize()} Kernel\nAccuracy: {test_acc:.4f}')
    ax.grid(True, alpha=0.3)

plt.suptitle('SVM Decision Boundaries — Kernel Comparison', fontsize=14)

plt.tight_layout()

plt.show()

8. K-Nearest Neighbors (KNN)

What It Is: KNN is a simple, non-parametric algorithm that makes predictions based on the K most similar training examples. For classification, it uses majority voting among K neighbors. For regression, it uses the average of K neighbors.

How It Works:

- Store all training data points

- For a new data point, calculate distance to all training points

- Find the K nearest neighbors

- Return majority class (classification) or average value (regression)
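Those steps translate almost line for line into code. Here is a minimal from-scratch sketch for classification in plain Python; the toy points and the `knn_predict` name are assumptions for illustration, and it makes no attempt at the indexing tricks real libraries use for speed.

```python
from collections import Counter
import math

def knn_predict(train_X, train_y, query, k=3):
    # Distance from the query to every stored training point
    distances = [
        (math.dist(point, query), label)
        for point, label in zip(train_X, train_y)
    ]
    # Keep the k closest neighbors
    nearest = sorted(distances, key=lambda pair: pair[0])[:k]
    # Majority vote among their labels
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Toy 2D training set with two well-separated groups
train_X = [(1.0, 1.0), (1.5, 2.0), (2.0, 1.0), (6.0, 6.0), (7.0, 7.5), (6.5, 8.0)]
train_y = ["A", "A", "A", "B", "B", "B"]

print(knn_predict(train_X, train_y, (2.0, 2.0)))
print(knn_predict(train_X, train_y, (6.0, 7.0)))
```

Notice there is no `fit` step at all: "training" is just keeping the data around, and every prediction scans the full training set.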

Distance Metrics:

- Euclidean Distance — Straight-line distance (most common)

- Manhattan Distance — Sum of absolute differences

- Minkowski Distance — Generalization of both

- Cosine Similarity — For text and high-dimensional data
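The relationship between these metrics is easiest to see in code. In this small plain-Python sketch, Euclidean and Manhattan distance are both written as special cases of Minkowski distance; the function names are my own.

```python
import math

def minkowski(a, b, p):
    # p=1 gives Manhattan distance, p=2 gives Euclidean distance
    return sum(abs(x - y) ** p for x, y in zip(a, b)) ** (1 / p)

def euclidean(a, b):
    return minkowski(a, b, 2)

def manhattan(a, b):
    return minkowski(a, b, 1)

def cosine_similarity(a, b):
    # Compares direction only, ignoring vector length
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

print(euclidean((0, 0), (3, 4)))
print(manhattan((0, 0), (3, 4)))
print(cosine_similarity((1, 0), (0, 1)))
print(cosine_similarity((1, 2), (2, 4)))
```

Cosine similarity is a similarity, not a distance: higher means closer. To use it where a distance is expected, a common convention is 1 minus the similarity.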

When to Use:

- Small to medium datasets

- When decision boundaries are irregular

- Recommendation systems

- Anomaly detection

Important: KNN has no explicit training phase — it’s a lazy learner. All computation happens at prediction time, making prediction slow for large datasets.

from sklearn.neighbors import KNeighborsClassifier

from sklearn.datasets import load_digits

# Handwritten digit recognition

digits = load_digits()

X_d, y_d = digits.data, digits.target

X_tr_d, X_te_d, y_tr_d, y_te_d = train_test_split(

X_d, y_d, test_size=0.2, random_state=42

)

sc_d = StandardScaler()

X_tr_d_sc = sc_d.fit_transform(X_tr_d)

X_te_d_sc = sc_d.transform(X_te_d)

# Find optimal K

k_values = range(1, 21)

train_scores, test_scores = [], []

for k in k_values:
    knn = KNeighborsClassifier(n_neighbors=k, metric='euclidean')
    knn.fit(X_tr_d_sc, y_tr_d)
    train_scores.append(knn.score(X_tr_d_sc, y_tr_d))
    test_scores.append(knn.score(X_te_d_sc, y_te_d))

optimal_k = k_values[test_scores.index(max(test_scores))]

print(f"=== KNN — DIGIT RECOGNITION ===")

print(f"Optimal K: {optimal_k}")

print(f"Best Test Accuracy: {max(test_scores):.4f}")

plt.figure(figsize=(10, 5))

plt.plot(k_values, train_scores, 'o-', color='blue',

label='Training Accuracy', linewidth=2)

plt.plot(k_values, test_scores, 's-', color='red',

label='Test Accuracy', linewidth=2)

plt.axvline(x=optimal_k, color='green', linestyle='--',

label=f'Optimal K={optimal_k}')

plt.xlabel('Number of Neighbors (K)')

plt.ylabel('Accuracy')

plt.title('KNN: Accuracy vs K Value — Digit Recognition')

plt.legend()

plt.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

9. Naive Bayes Algorithm

What It Is: Naive Bayes is a probabilistic classifier based on Bayes’ Theorem with a “naive” assumption of conditional independence between features given the class label.

Bayes’ Theorem:

P(Class | Features) = P(Features | Class) × P(Class) / P(Features)

Why “Naive”? The algorithm assumes all features are independent of each other given the class, which is rarely true in reality (hence “naive”). Despite this oversimplification, it often works well in practice, especially for text classification.
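A tiny worked example makes the formula less abstract. The sketch below applies Bayes' Theorem with the independence assumption to a two-class spam problem. Every probability in it is invented for illustration; a real classifier would estimate these values from training data.

```python
def naive_bayes_posterior(priors, likelihoods, words):
    """Posterior P(class | words), assuming words are independent given the class."""
    unnormalized = {}
    for cls, prior in priors.items():
        score = prior  # P(Class)
        for word in words:
            score *= likelihoods[cls][word]  # multiply in each P(word | Class)
        unnormalized[cls] = score
    evidence = sum(unnormalized.values())  # P(Features)
    return {cls: score / evidence for cls, score in unnormalized.items()}

# Hypothetical probabilities, not estimated from any dataset
priors = {"spam": 0.4, "ham": 0.6}
likelihoods = {
    "spam": {"free": 0.50, "prize": 0.30},
    "ham": {"free": 0.05, "prize": 0.02},
}

post_one = naive_bayes_posterior(priors, likelihoods, ["free"])
post_two = naive_bayes_posterior(priors, likelihoods, ["free", "prize"])
print(f"P(spam | 'free')          = {post_one['spam']:.3f}")
print(f"P(spam | 'free', 'prize') = {post_two['spam']:.3f}")
```

Each extra spam-like word multiplies in more evidence, so the posterior climbs quickly. In practice, implementations add up log-probabilities instead of multiplying raw probabilities, which avoids numerical underflow on long documents.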

Variants:

- Gaussian Naive Bayes — For continuous features with normal distribution

- Multinomial Naive Bayes — For discrete count features (text classification, word frequencies)

- Bernoulli Naive Bayes — For binary features

When to Use:

- Text classification (spam filtering, sentiment analysis, news categorization)

- Real-time prediction (extremely fast training and prediction)

- Very high-dimensional data

- When training data is limited

from sklearn.naive_bayes import MultinomialNB, GaussianNB

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.pipeline import Pipeline

# Text classification — spam detection

emails = [

"Free money! Click here to claim your prize now",

"Congratulations you won a lottery prize",

"URGENT: Your account has been compromised click now",

"Buy cheap medications online no prescription needed",

"Win big money prizes click this link",

"Hi, are we still meeting tomorrow for lunch?",

"Please review the attached project proposal",

"The quarterly report is ready for your review",

"Team meeting scheduled for Monday at 10am",

"Let me know when you're free to discuss the project",

"Your invoice for last month is attached",

"Thank you for attending the webinar yesterday"

]

labels = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0]

# 1 = Spam, 0 = Not Spam

# Build text classification pipeline

text_clf = Pipeline([

('vectorizer', CountVectorizer(stop_words='english')),

('classifier', MultinomialNB(alpha=1.0))

])

text_clf.fit(emails, labels)

# Test on new emails

test_emails = [

"Claim your free prize money now",

"Please find the attached meeting notes",

"Win cash prizes click here immediately"

]

predictions = text_clf.predict(test_emails)

probabilities = text_clf.predict_proba(test_emails)

print("=== NAIVE BAYES — SPAM DETECTION ===")

for email, pred, prob in zip(test_emails, predictions, probabilities):
    label = "🔴 SPAM" if pred == 1 else "✅ NOT SPAM"
    confidence = max(prob) * 100
    print(f"\nEmail: '{email[:50]}...'")
    print(f"Prediction: {label} (Confidence: {confidence:.1f}%)")

PART 2: Unsupervised Learning Algorithms

10. K-Means Clustering

What It Is: K-Means partitions data into K distinct clusters by iteratively assigning each data point to its nearest cluster centroid and updating centroids until convergence.

The Algorithm:

1. Initialize K centroids randomly

2. Assign each data point to the nearest centroid (Euclidean distance)

3. Recalculate centroids as the mean of all points in each cluster

4. Repeat steps 2–3 until centroids stop moving (convergence)
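The whole algorithm fits in a few lines of NumPy. The sketch below is a minimal from-scratch version, not scikit-learn's implementation: the function names, the synthetic two-blob data, and the choice of starting centroids (randomly picked data points) are all assumptions. It also reruns from several random starts and keeps the best result, the same idea behind scikit-learn's `n_init` parameter.

```python
import numpy as np

def assign(X, centroids):
    """Return each point's nearest-centroid index and squared distance to it."""
    sq_dists = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return sq_dists.argmin(axis=1), sq_dists.min(axis=1)

def kmeans_once(X, k, rng, max_iter=100):
    # Initialize: use k distinct data points as the starting centroids
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(max_iter):
        labels, _sq = assign(X, centroids)
        # Update: each centroid becomes the mean of its assigned points
        # (an empty cluster keeps its previous centroid)
        updated = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(updated, centroids):  # centroids stopped moving
            break
        centroids = updated
    labels, sq_dists = assign(X, centroids)
    return centroids, labels, float(sq_dists.sum())  # last value is the inertia

def kmeans(X, k, rng, n_init=10):
    # Random starts can converge to poor solutions, so keep the lowest-inertia run
    runs = [kmeans_once(X, k, rng) for _ in range(n_init)]
    return min(runs, key=lambda run: run[2])

rng = np.random.default_rng(0)
X = np.vstack([
    rng.normal(loc=0.0, scale=0.5, size=(50, 2)),   # blob around (0, 0)
    rng.normal(loc=10.0, scale=0.5, size=(50, 2)),  # blob around (10, 10)
])
centroids, labels, inertia = kmeans(X, k=2, rng=rng)
print("Centroids:\n", np.round(centroids, 2))
print("Inertia:", round(inertia, 2))
```

Which blob ends up as cluster 0 and which as cluster 1 depends on the random start, so cluster IDs should never be read as meaningful on their own.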

When to Use:

- Customer segmentation for targeted marketing

- Document clustering for topic modeling

- Image compression (color quantization)

- Anomaly detection (points far from all clusters)

- Preprocessing step for supervised learning

from sklearn.cluster import KMeans

from sklearn.datasets import make_blobs

from sklearn.metrics import silhouette_score

# Customer segmentation simulation

np.random.seed(42)

n_customers = 500

purchase_frequency = np.concatenate([

np.random.normal(2, 0.5, 150), # Low-frequency buyers

np.random.normal(8, 1.5, 200), # Regular buyers

np.random.normal(20, 3, 100), # Frequent buyers

np.random.normal(40, 5, 50) # VIP buyers

])

avg_spend = np.concatenate([

np.random.normal(500, 100, 150),

np.random.normal(2000, 300, 200),

np.random.normal(5000, 800, 100),

np.random.normal(15000, 2000, 50)

])

X_seg = np.column_stack([purchase_frequency, avg_spend])

# Find optimal K using Elbow + Silhouette

k_range = range(2, 9)

inertias, sil_scores = [], []

for k in k_range:
    km = KMeans(n_clusters=k, random_state=42, n_init=10)
    labels = km.fit_predict(X_seg)
    inertias.append(km.inertia_)
    sil_scores.append(silhouette_score(X_seg, labels))

fig, axes = plt.subplots(1, 2, figsize=(14, 5))

axes[0].plot(k_range, inertias, 'o-', color='navy', linewidth=2)

axes[0].set_title('Elbow Method', fontsize=13)

axes[0].set_xlabel('Number of Clusters (K)')

axes[0].set_ylabel('Inertia')

axes[0].grid(True, alpha=0.3)

axes[1].plot(k_range, sil_scores, 's-', color='green', linewidth=2)

axes[1].set_title('Silhouette Score (Higher = Better)', fontsize=13)

axes[1].set_xlabel('Number of Clusters (K)')

axes[1].set_ylabel('Silhouette Score')

axes[1].grid(True, alpha=0.3)

plt.suptitle('K-Means: Optimal K Selection', fontsize=14)

plt.tight_layout()

plt.show()

# Final model with K=4

km_final = KMeans(n_clusters=4, random_state=42, n_init=10)

cluster_labels = km_final.fit_predict(X_seg)

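# Note: K-Means assigns cluster IDs arbitrarily, so check the centroids before relying on this mapping
segment_names = {0: 'Low-Value', 1: 'Regular', 2: 'High-Value', 3: 'VIP'}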

colors = ['#e74c3c', '#3498db', '#2ecc71', '#f39c12']

plt.figure(figsize=(12, 8))

for cluster_id in range(4):
    mask = cluster_labels == cluster_id
    plt.scatter(X_seg[mask, 0], X_seg[mask, 1],
                c=colors[cluster_id], s=60, alpha=0.7,
                label=f'Cluster {cluster_id}: {np.sum(mask)} customers',
                edgecolors='white', linewidth=0.5)

plt.scatter(km_final.cluster_centers_[:, 0],

km_final.cluster_centers_[:, 1],

c='black', marker='*', s=400, zorder=10, label='Centroids')

plt.xlabel('Purchase Frequency (monthly)')

plt.ylabel('Average Spend (₹)')

plt.title('K-Means Customer Segmentation (K=4)', fontsize=14)

plt.legend()

plt.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

11. DBSCAN (Density-Based Spatial Clustering)

What It Is: DBSCAN (Density-Based Spatial Clustering of Applications with Noise) groups together points that are closely packed, marking points in low-density regions as outliers/noise.

Key Parameters:

- eps (ε) — Maximum distance between two points to be considered neighbors

- min_samples — Minimum number of points required to form a dense region (core point)
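These two parameters sort every point into one of three roles: core, border, or noise. The sketch below computes those roles by brute force with NumPy. The five toy points and the `classify_points` helper are assumptions for illustration; it follows the convention that a point counts as its own neighbor, and it stops short of the final step of linking core points into clusters.

```python
import numpy as np

def classify_points(X, eps, min_samples):
    """Label each point 'core', 'border', or 'noise' using DBSCAN's definitions."""
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    neighbors = dists <= eps  # each point counts as its own neighbor
    # Core: at least min_samples points within eps
    is_core = neighbors.sum(axis=1) >= min_samples
    # Border: not core itself, but within eps of at least one core point
    near_core = (neighbors & is_core[None, :]).any(axis=1)
    return np.where(is_core, "core", np.where(near_core, "border", "noise"))

# Four points in a tight row plus one far-away outlier
X = np.array([[0.0, 0.0], [0.1, 0.0], [0.2, 0.0], [0.3, 0.0], [5.0, 5.0]])
kinds = classify_points(X, eps=0.15, min_samples=3)

for point, kind in zip(X, kinds):
    print(point, "->", kind)
```

Raising `eps` or lowering `min_samples` turns more points into core points and merges clusters; moving them the other way pushes more points into the noise category.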

Advantages over K-Means:

- Does NOT require specifying the number of clusters upfront

- Can find clusters of arbitrary shape (K-Means assumes spherical clusters)

- Robust to outliers — marks them as noise (-1)

- Identifies clusters that K-Means misses in non-convex shapes

from sklearn.cluster import DBSCAN

from sklearn.datasets import make_moons, make_circles

fig, axes = plt.subplots(2, 2, figsize=(14, 10))

fig.suptitle('DBSCAN vs K-Means on Non-Convex Data', fontsize=14)

datasets = [

make_moons(n_samples=300, noise=0.05, random_state=42),

make_circles(n_samples=300, noise=0.05, factor=0.4, random_state=42)

]

for row, (X_data, _) in enumerate(datasets):
    # K-Means
    km_labels = KMeans(n_clusters=2, random_state=42).fit_predict(X_data)
    axes[row, 0].scatter(X_data[:, 0], X_data[:, 1],
                         c=km_labels, cmap='Set1', s=40, alpha=0.8)
    axes[row, 0].set_title(f'{"Moons" if row==0 else "Circles"}: K-Means')
    axes[row, 0].grid(True, alpha=0.3)

    # DBSCAN
    db_labels = DBSCAN(eps=0.3, min_samples=5).fit_predict(X_data)
    n_clusters = len(set(db_labels)) - (1 if -1 in db_labels else 0)
    n_noise = list(db_labels).count(-1)
    axes[row, 1].scatter(X_data[:, 0], X_data[:, 1],
                         c=db_labels, cmap='Set1', s=40, alpha=0.8)
    axes[row, 1].set_title(
        f'{"Moons" if row==0 else "Circles"}: DBSCAN\n'
        f'Clusters: {n_clusters}, Noise: {n_noise}'
    )
    axes[row, 1].grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

12. Principal Component Analysis (PCA)

What It Is: PCA is a dimensionality reduction technique that transforms high-dimensional data into a lower-dimensional representation while preserving as much variance (information) as possible.

How It Works:

- Standardize the data

- Compute the covariance matrix

- Find eigenvectors (principal components) and eigenvalues

- Sort principal components by explained variance (descending)

- Project data onto the top K principal components
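The five steps above can be sketched directly in NumPy. This is a minimal illustration rather than how scikit-learn implements PCA internally (it uses a singular value decomposition); the Wine dataset and the choice of k = 2 are assumptions made for the example.

```python
import numpy as np
from sklearn.datasets import load_wine

X = load_wine().data  # 178 samples, 13 features

# Step 1: standardize each feature to zero mean and unit variance
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

# Step 2: covariance matrix of the standardized features (13 x 13)
cov = np.cov(X_std, rowvar=False)

# Step 3: eigen-decomposition; eigh is meant for symmetric matrices
eigenvalues, eigenvectors = np.linalg.eigh(cov)

# Step 4: sort components by explained variance, largest first
order = np.argsort(eigenvalues)[::-1]
eigenvalues = eigenvalues[order]
eigenvectors = eigenvectors[:, order]
explained_ratio = eigenvalues / eigenvalues.sum()

# Step 5: project the data onto the top k principal components
k = 2
X_projected = X_std @ eigenvectors[:, :k]

print("Explained variance ratio of the top 2 components:", explained_ratio[:k])
print("Projected shape:", X_projected.shape)
```

Each eigenvalue equals the variance of the data along its eigenvector, which is why sorting by eigenvalue is the same as sorting by explained variance.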

When to Use:

- Reduce dimensionality before applying ML algorithms

- Visualize high-dimensional data in 2D/3D

- Remove noise from data

- Handle multicollinearity in features

- Compress data while preserving structure
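For the first use case in the list above, PCA usually sits inside a pipeline so that scaling and projection are fitted on the training folds only. The sketch below is one way to do this, assuming the Wine dataset, a logistic regression classifier, and a 95% variance target; all three are illustrative choices.

```python
from sklearn.datasets import load_wine
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_wine(return_X_y=True)

# A float n_components asks PCA to keep just enough components
# to explain that fraction of the total variance
pipeline = make_pipeline(
    StandardScaler(),
    PCA(n_components=0.95),
    LogisticRegression(max_iter=1000),
)

# Cross-validation refits the scaler and PCA inside every fold
scores = cross_val_score(pipeline, X, y, cv=5)

# Fit once on all data to inspect how many components were kept
pipeline.fit(X, y)
n_kept = pipeline.named_steps['pca'].n_components_

print(f"Components kept: {n_kept} of {X.shape[1]}")
print(f"5-fold CV accuracy: {scores.mean():.4f}")
```

Keeping PCA inside the pipeline avoids leaking information from the validation folds into the projection.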

```python
import numpy as np
import matplotlib.pyplot as plt
from sklearn.datasets import load_wine
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Dimensionality reduction on Wine dataset
wine = load_wine()
X_pca = wine.data
y_pca = wine.target

# PCA is sensitive to feature scale, so standardize first
sc_pca = StandardScaler()
X_pca_scaled = sc_pca.fit_transform(X_pca)
print(f"Original dimensions: {X_pca.shape[1]} features")

# Explained variance analysis
pca_full = PCA()
pca_full.fit(X_pca_scaled)
cumulative_variance = np.cumsum(pca_full.explained_variance_ratio_)

plt.figure(figsize=(12, 5))

# Left panel: per-component (bars) and cumulative (line) explained variance
plt.subplot(1, 2, 1)
plt.bar(range(1, len(pca_full.explained_variance_ratio_) + 1),
        pca_full.explained_variance_ratio_ * 100,
        color='steelblue', alpha=0.7, edgecolor='black')
plt.plot(range(1, len(cumulative_variance) + 1),
         cumulative_variance * 100, 'ro-', linewidth=2)
plt.axhline(y=95, color='green', linestyle='--',
            label='95% Variance Threshold')
plt.xlabel('Principal Component')
plt.ylabel('Explained Variance (%)')
plt.title('Scree Plot — PCA Explained Variance')
plt.legend()
plt.grid(True, alpha=0.3)

# Right panel: 2D visualization
pca_2d = PCA(n_components=2)
X_pca_2d = pca_2d.fit_transform(X_pca_scaled)

plt.subplot(1, 2, 2)
colors_pca = ['#e74c3c', '#3498db', '#2ecc71']
for class_id, color in enumerate(colors_pca):
    mask = y_pca == class_id
    plt.scatter(X_pca_2d[mask, 0], X_pca_2d[mask, 1],
                c=color, label=wine.target_names[class_id],
                s=60, alpha=0.8, edgecolors='white')
plt.xlabel(f'PC1 ({pca_2d.explained_variance_ratio_[0]*100:.1f}% variance)')
plt.ylabel(f'PC2 ({pca_2d.explained_variance_ratio_[1]*100:.1f}% variance)')
plt.title('PCA: Wine Dataset in 2D\n'
          f'(Total variance: {sum(pca_2d.explained_variance_ratio_)*100:.1f}%)')
plt.legend()
plt.grid(True, alpha=0.3)

plt.tight_layout()
plt.show()

# Smallest number of components whose cumulative variance reaches 95%
n_95 = np.argmax(cumulative_variance >= 0.95) + 1
print(f"Components needed for 95% variance: {n_95} (from {X_pca.shape[1]})")
print(f"Dimensionality reduction: {X_pca.shape[1]}D → {n_95}D")
```

PART 3: Ensemble Learning Algorithms

Ensemble methods combine multiple models into a single predictor that is usually more accurate and more stable than any of its individual members. On tabular data in particular, they are among the most powerful types of machine learning algorithms available.

13. Gradient Boosting Algorithms (XGBoost, LightGBM, CatBoost)

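What It Is: Gradient Boosting builds an ensemble of weak learners (typically shallow decision trees) sequentially. Each new tree is fit to the errors of the ensemble built so far: the residuals for squared-error loss, or more generally the negative gradient of the loss. The final prediction is an initial estimate plus the sum of all tree predictions, each scaled by the learning rate.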
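The sequential residual-fitting idea can be sketched in a few lines for regression with squared-error loss. This is a teaching sketch, not how XGBoost, LightGBM, or CatBoost are implemented; the synthetic sine data, tree depth, learning rate, and number of trees are all assumptions chosen for illustration.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

# Synthetic 1D regression problem (illustrative data)
rng = np.random.default_rng(42)
X = rng.uniform(0, 6, size=(200, 1))
y = np.sin(X[:, 0]) + rng.normal(0, 0.1, size=200)

learning_rate = 0.1
n_trees = 50

# Start from a constant prediction: the mean of the targets
initial_prediction = y.mean()
prediction = np.full(len(y), initial_prediction)
trees = []
mse_history = [np.mean((y - prediction) ** 2)]

for _ in range(n_trees):
    # For squared-error loss, the negative gradient is simply the residual
    residuals = y - prediction
    tree = DecisionTreeRegressor(max_depth=2, random_state=0).fit(X, residuals)
    # Add a damped version of the new tree's correction to the ensemble
    prediction += learning_rate * tree.predict(X)
    trees.append(tree)
    mse_history.append(np.mean((y - prediction) ** 2))

def predict(X_new):
    """Initial estimate plus the learning-rate-scaled sum of all tree outputs."""
    return initial_prediction + learning_rate * sum(t.predict(X_new) for t in trees)

print(f"Training MSE before boosting: {mse_history[0]:.4f}")
print(f"Training MSE after {n_trees} trees: {mse_history[-1]:.4f}")
```

A smaller learning rate makes each tree's correction more cautious, which typically requires more trees but generalizes better.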

Three Leading Implementations:

| Library | Developer | Key Advantage |
|---|---|---|
| XGBoost | DMLC | Regularization, speed, Kaggle winner |
| LightGBM | Microsoft | Extremely fast, low memory, large datasets |
| CatBoost | Yandex | Handles categorical features natively |

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import GradientBoostingClassifier, AdaBoostClassifier
from sklearn.model_selection import train_test_split, cross_val_score

# This example uses scikit-learn's built-in boosting classifiers;
# XGBoost, LightGBM and CatBoost offer scikit-learn-compatible estimators
# that follow the same fit/score pattern.
bc = load_breast_cancer()
X_bc, y_bc = bc.data, bc.target

X_tr_bc, X_te_bc, y_tr_bc, y_te_bc = train_test_split(
    X_bc, y_bc, test_size=0.2, random_state=42
)

boosting_models = {
    'AdaBoost': AdaBoostClassifier(n_estimators=100, random_state=42),
    'Gradient Boosting': GradientBoostingClassifier(
        n_estimators=100, learning_rate=0.1, max_depth=3, random_state=42
    )
}

print("=== BOOSTING ALGORITHMS — CANCER DETECTION ===")
for name, model in boosting_models.items():
    model.fit(X_tr_bc, y_tr_bc)
    train_acc = model.score(X_tr_bc, y_tr_bc)
    test_acc = model.score(X_te_bc, y_te_bc)
    # cross_val_score refits a fresh clone of the model on each fold
    cv = cross_val_score(model, X_bc, y_bc, cv=5).mean()
    print(f"\n{name}:")
    print(f"  Train Accuracy: {train_acc:.4f}")
    print(f"  Test Accuracy: {test_acc:.4f}")
    print(f"  5-Fold CV Mean: {cv:.4f}")
```

PART 4: Reinforcement Learning Algorithms

14. Q-Learning

What It Is: Q-Learning is a model-free reinforcement learning algorithm that learns a policy telling an agent what action to take in each state to maximize cumulative future reward.

The Q-Table: Q-Learning maintains a Q-table — a matrix of Q-values representing the expected future reward for taking action A in state S.

The Bellman Equation:

Q(s, a) ← Q(s, a) + α [r + γ · max_a′ Q(s′, a′) − Q(s, a)]

Where α is the learning rate, r is the immediate reward, γ is the discount factor, s′ is the next state, and the maximum is taken over all actions a′ available in s′.
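The update rule above can be sketched as tabular Q-Learning on a toy problem. The environment here is hypothetical: a five-state corridor in which the agent starts at the left end and receives a reward of 1 for reaching the right end. The values of α, γ, the exploration rate ε, and the episode count are illustrative assumptions.

```python
import numpy as np

# Hypothetical corridor environment: states 0..4, goal at state 4
n_states, n_actions = 5, 2          # actions: 0 = move left, 1 = move right
goal = n_states - 1
alpha, gamma, epsilon = 0.5, 0.9, 0.2

rng = np.random.default_rng(0)
Q = np.zeros((n_states, n_actions))  # the Q-table, initialized to zero

def step(state, action):
    """Move one cell left or right; reward 1.0 only when the goal is reached."""
    next_state = max(state - 1, 0) if action == 0 else state + 1
    done = next_state == goal
    return next_state, (1.0 if done else 0.0), done

for episode in range(500):
    state = 0
    for _ in range(100):             # cap on episode length
        # Epsilon-greedy action selection, breaking ties at random
        if rng.random() < epsilon:
            action = int(rng.integers(n_actions))
        else:
            best = np.flatnonzero(Q[state] == Q[state].max())
            action = int(rng.choice(best))

        next_state, reward, done = step(state, action)

        # Q-Learning update: no future value is added after a terminal step
        target = reward if done else reward + gamma * Q[next_state].max()
        Q[state, action] += alpha * (target - Q[state, action])

        state = next_state
        if done:
            break

greedy_policy = Q[:goal].argmax(axis=1)
print("Learned Q-table:\n", Q.round(3))
print("Greedy action per non-terminal state (1 = right):", greedy_policy)
```

Because the update always bootstraps from the best next action rather than the action actually taken, Q-Learning can learn the optimal policy even while the agent explores.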

Real-World Applications:

- Game playing (Atari games via Deep Q-Networks; Chess and Go systems rely on related reinforcement learning methods)

- Robot navigation and locomotion

- Autonomous vehicle path planning

- Dynamic pricing optimization

- Resource scheduling in cloud computing

Algorithm Selection Guide — Choosing the Right Algorithm

| Problem Type | Small Dataset | Medium Dataset | Large Dataset |
|---|---|---|---|
| Binary Classification | Logistic Reg, SVM | Random Forest, XGBoost | Neural Networks, LightGBM |
| Multi-class Classification | Decision Tree, KNN | Random Forest, XGBoost | Deep Learning, CatBoost |
| Regression | Linear Regression | Random Forest | XGBoost, Neural Networks |
| Clustering | K-Means, DBSCAN | K-Means | Mini-batch K-Means |
| Text Classification | Naive Bayes | SVM, Logistic Reg | BERT, Transformers |
| Image Classification | SVM | CNN | Deep CNN, ResNet |
| Anomaly Detection | IsolationForest | DBSCAN | Autoencoder |
| Dimensionality Reduction | PCA | PCA, t-SNE | Autoencoders |

The Golden Rule of Algorithm Selection

Start simple, add complexity only when needed:

- Baseline first — Linear/Logistic Regression, Decision Tree

- Add power — Random Forest, XGBoost

- Go deep if needed — Neural Networks, Deep Learning

- Always cross-validate — Don’t judge by training accuracy alone
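A minimal sketch of this workflow compares a simple baseline against a more powerful model using the same cross-validation splits. The dataset (scikit-learn's breast cancer data) and the two candidate models are assumptions chosen for illustration.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)

# Step 1: a simple baseline. Step 2: a more powerful model to compare against it.
candidates = {
    "Baseline (Logistic Regression)": make_pipeline(
        StandardScaler(), LogisticRegression(max_iter=1000)
    ),
    "More power (Random Forest)": RandomForestClassifier(
        n_estimators=100, random_state=42
    ),
}

# Judge every candidate by cross-validated accuracy, not training accuracy
cv_means = {}
for name, model in candidates.items():
    scores = cross_val_score(model, X, y, cv=5)
    cv_means[name] = scores.mean()
    print(f"{name}: {scores.mean():.4f} (std {scores.std():.4f})")
```

If the more complex model does not clearly beat the baseline under cross-validation, the simpler model is usually the better choice, since it is faster to train and easier to explain.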

Comparison of All Major ML Algorithms

| Algorithm | Type | Interpretable | Needs Scaling | Handles Missing | Training Speed | Prediction Speed |
|---|---|---|---|---|---|---|
| Linear Regression | Supervised | ✅ High | ✅ Yes | ❌ No | ⚡ Very Fast | ⚡ Very Fast |
| Logistic Regression | Supervised | ✅ High | ✅ Yes | ❌ No | ⚡ Very Fast | ⚡ Very Fast |
| Decision Tree | Supervised | ✅ High | ❌ No | ❌ No | ⚡ Fast | ⚡ Fast |
| Random Forest | Supervised | ⚠️ Medium | ❌ No | ⚠️ Partial | 🐌 Moderate | 🐌 Moderate |
| SVM | Supervised | ❌ Low | ✅ Yes | ❌ No | 🐌 Slow | 🐌 Moderate |
| KNN | Supervised | ⚠️ Medium | ✅ Yes | ❌ No | ⚡ None | 🐌 Very Slow |
| Naive Bayes | Supervised | ✅ High | ❌ No | ✅ Yes | ⚡ Very Fast | ⚡ Very Fast |
| XGBoost | Supervised | ⚠️ Medium | ❌ No | ✅ Yes | 🐌 Moderate | ⚡ Fast |
| K-Means | Unsupervised | ✅ High | ✅ Yes | ❌ No | ⚡ Fast | ⚡ Fast |
| DBSCAN | Unsupervised | ⚠️ Medium | ✅ Yes | ❌ No | 🐌 Slow | 🐌 Slow |
| PCA | Unsupervised | ⚠️ Medium | ✅ Yes | ❌ No | ⚡ Fast | ⚡ Fast |
| Neural Networks | Supervised | ❌ Low | ✅ Yes | ⚠️ Partial | 🐌 Very Slow | ⚡ Fast |

Frequently Asked Questions — Types of Machine Learning Algorithms

Q1: How many types of machine learning algorithms are there? There are three main types based on learning approach: Supervised, Unsupervised, and Reinforcement Learning, with semi-supervised learning as a hybrid of the first two. Within these, there are dozens of individual algorithms, including Linear Regression, Logistic Regression, Decision Trees, Random Forest, SVM, KNN, Naive Bayes, K-Means, DBSCAN, PCA, XGBoost, and Neural Networks, each suited to specific problem types.

Q2: Which machine learning algorithm is best for beginners? Linear Regression and Logistic Regression are the best starting points — they’re simple, interpretable, and teach fundamental concepts. Decision Trees are also beginner-friendly as they produce visualizable, explainable results.

Q3: Which ML algorithm is most accurate? There’s no single “most accurate” algorithm; it depends on the dataset and the problem. In practice, gradient boosting algorithms (XGBoost, LightGBM) are often the strongest performers on tabular data, while deep neural networks excel on images, text, and audio.

Q4: Do I need to know math to understand ML algorithms? Basic understanding of statistics (mean, variance, probability), linear algebra (vectors, matrices), and calculus (derivatives for gradient descent) helps tremendously. However, you can use scikit-learn and TensorFlow without deep mathematical mastery, especially when starting out.

Q5: What’s the difference between bagging and boosting? Bagging (e.g. Random Forest) trains many trees independently on bootstrap samples of the data and averages their predictions, which mainly reduces variance. Boosting (e.g. XGBoost, GradientBoosting) trains trees sequentially, with each tree correcting the errors of the ensemble so far, which mainly reduces bias; with regularization such as a small learning rate, it can keep variance in check as well.

Q6: When should I use clustering instead of classification? Use classification when you have labeled data and know the categories in advance. Use clustering when you have unlabeled data and want to discover natural groupings — you don’t know the categories beforehand.

Q7: Is deep learning always better than traditional ML algorithms? No. For structured/tabular data, gradient boosting algorithms (XGBoost, LightGBM) often outperform deep learning while being faster to train and easier to interpret. Deep learning shines on unstructured data — images, text, audio, video — and very large datasets.

Conclusion — Mastering the Types of Machine Learning Algorithms

Understanding the types of machine learning algorithms is the fundamental skill that separates effective ML practitioners from those who blindly apply techniques without understanding why. In this comprehensive guide, we’ve covered:

- Supervised Regression: Linear Regression, Polynomial Regression, Ridge/Lasso

- Supervised Classification: Logistic Regression, Decision Trees, Random Forest, SVM, KNN, Naive Bayes

- Unsupervised Clustering: K-Means, DBSCAN, Hierarchical Clustering

- Dimensionality Reduction: PCA, t-SNE

- Ensemble Methods: Random Forest (Bagging), XGBoost/LightGBM (Boosting), Stacking

- Reinforcement Learning: Q-Learning and Deep RL approaches

- Algorithm Selection Guide — how to choose the right algorithm for your problem

- Comprehensive Comparison Table — across all key dimensions

Each algorithm is a powerful tool with its own strengths, weaknesses, and ideal use cases. The most successful ML practitioners don’t try to memorize which algorithm is “best” — they develop an intuition for matching algorithms to problems through experience, experimentation, and a solid understanding of the principles behind each approach.

The journey to mastering the types of machine learning algorithms is one of the most rewarding paths in modern technology. The skills you build will empower you to solve real-world problems, build intelligent systems, and contribute to one of the most transformative fields in human history.

At elearncourses.com, we offer expert-led, project-based machine learning courses covering all types of ML algorithms — from beginner foundations through advanced deep learning, NLP, and computer vision. With hands-on coding labs, real-world projects, and industry certifications, our courses prepare you to apply ML algorithms confidently in any professional setting.

Start mastering machine learning algorithms today — the intelligent future is being built right now, and you can be part of it.